Artificial intelligence has moved from buzzword to reality in software testing. Self-healing automation, smart test prioritization, predictive defect analysis, and automated test generation offer new answers to long-standing problems in software QA. Faster feedback, less manual effort, and smarter insights make these solutions very attractive.

However, once organizations start implementing such tools in real-world projects, reality can differ considerably from initial expectations. AI can be a great aid to the testing process, but it also brings a new set of challenges that need to be addressed.

AI-based testing is not an out-of-the-box solution. It relies heavily on data, learning, and domain understanding. Teams that approach it with realistic expectations will have far more success than teams that believe AI will magically solve existing testing problems.

- Challenge 1: Data Quality, Availability, and Governance

- Challenge 2: Limited Domain Understanding and Lack of Explainability

- Challenge 3: Training and Adaptability of Models

- Challenge 4: Tool Integration and Operational Fit

- Challenge 5: Trust, Reliability, and Human-AI Collaboration

- Conclusion: Applying AI-Based Testing in the Real World

Challenge 1: Data Quality, Availability, and Governance

AI-powered testing lives and dies by data. Unlike traditional test automation, in which the flow is explicitly programmed, machine learning models learn from previous test executions, defects, logs, and usage data. When that data is incomplete, inconsistent, or poorly managed, the model’s results are unreliable.

Data availability further complicates the issue. New projects have insufficient history, and regulated industries often are tightly constrained in terms of how much real production data can be used. In most cases, privacy laws, security concerns, and compliance requirements greatly limit how data is consumed by AI models.

Example 1: Flaky Data Leading to Wrong AI Decisions

A mid-sized e-commerce company adopted an AI-based test prioritization tool to speed up regression testing. The AI model relied heavily on historical test execution data and failure trends. However, many automated tests were flaky due to unstable test data and frequent environment issues.

Because these flaky failures were never cleaned up, the AI model incorrectly learned that certain checkout and payment tests were “high risk.” As a result, the AI repeatedly prioritized low-value flaky tests while deprioritizing genuinely critical flows that rarely failed but carried high business risk.

Once the team identified the issue, they cleaned execution logs, stabilized flaky tests, and standardized defect tagging. After retraining the model with high-quality data, test prioritization became far more accurate and meaningful.

Example 2: Limited Data in New Projects

A fintech startup attempted to use AI-based failure prediction early in a new mobile banking project. Since the application was newly built, there was very little historical execution or defect data available. The AI model struggled to provide useful insights and produced inconsistent predictions.

Instead of abandoning the tool, the team supplemented limited real data with synthetic transaction scenarios and risk-labeled test cases created by domain experts. This allowed the AI model to start learning patterns safely until sufficient real execution history became available.

How to Overcome This Challenge

AI-based testing works only as well as the data behind it. The first step is cleaning up existing test data by removing outdated tests, fixing flaky failures, and standardizing defect tagging so the AI learns from real issues instead of noise.
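One way to separate flaky failures from real ones before training is to look for tests that both passed and failed on the same code revision. The following is a minimal sketch, assuming execution history is available as (test name, commit, outcome) records; the record shape and the `find_flaky_tests` helper are illustrative and not taken from any specific tool.

```python
from collections import defaultdict

def find_flaky_tests(runs):
    """Return test names that both passed and failed on the same commit.

    `runs` is an iterable of (test_name, commit_id, passed) tuples.
    This record shape is an assumption made for illustration.
    """
    outcomes = defaultdict(set)
    for test_name, commit_id, passed in runs:
        outcomes[(test_name, commit_id)].add(passed)
    # Mixed outcomes on identical code suggest the test, its data, or the
    # environment is unstable rather than the application itself.
    return sorted({name for (name, _), seen in outcomes.items() if len(seen) == 2})

history = [
    ("test_checkout", "abc123", True),
    ("test_checkout", "abc123", False),
    ("test_login", "abc123", True),
    ("test_login", "def456", False),
]
flaky = find_flaky_tests(history)
print(flaky)
```

Tests flagged this way can be stabilized or excluded from the training set, so that the model does not mistake environment noise for product risk.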

Strong data governance is equally important. Teams should define clear rules around what data can be used, how long it stays relevant, and who is responsible for maintaining its quality. Without this, AI models slowly drift and lose accuracy.

When real production data is limited or restricted, synthetic and anonymized test data can be used to safely train models without violating security or compliance requirements.
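As a rough illustration of the anonymization idea, sensitive fields can be replaced with keyed hashes before records are handed to a model. This sketch uses only Python's standard `hmac` and `hashlib` modules; the field names, the `pseudonymize` helper, and the key handling are assumptions, and whether keyed hashing is sufficient depends on the regulations that apply to a given project.

```python
import hashlib
import hmac

# Hypothetical secret; in practice it would come from a secrets manager.
SECRET_KEY = b"replace-with-a-managed-secret"
# Assumed field names, chosen only for this example.
SENSITIVE_FIELDS = {"customer_name", "email", "account_number"}

def pseudonymize(record, key=SECRET_KEY):
    """Replace sensitive values with a keyed hash so records stay joinable."""
    cleaned = {}
    for field, value in record.items():
        if field in SENSITIVE_FIELDS:
            digest = hmac.new(key, str(value).encode("utf-8"), hashlib.sha256)
            cleaned[field] = digest.hexdigest()[:16]
        else:
            cleaned[field] = value
    return cleaned

raw = {"email": "jane@example.com", "amount": 120.50, "status": "FAILED"}
safe = pseudonymize(raw)
print(safe["amount"], safe["status"], safe["email"] != raw["email"])
```

Because the same input always maps to the same token, failure patterns tied to a particular customer or account remain visible to the model without exposing the underlying value.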

Most importantly, data quality is not a one-time task. Continuous monitoring, periodic retraining, and human review of AI recommendations ensure the model stays aligned with the application as it evolves.

Challenge 2: Limited Domain Understanding and Lack of Explainability

AI is very strong at pattern recognition but does not naturally understand business intent. Unlike human testers, it has no grasp of regulatory implications, customer impact, or business risk. It has no intuitive sense of why an infrequently failing test still matters in healthcare, finance, or e-commerce.

This becomes a problem when AI conclusions are disconnected from business context. For example, a model may choose to skip a test simply because it rarely fails, even though the process it covers is a high-risk transaction. From a data perspective this makes perfect sense; from a business perspective it is highly problematic.

The lack of explainability aggravates the issue. Most AI testing tools act like a black box and provide results without explaining their predictions. Teams find it extremely difficult to act when they have no explanation for why a test was skipped or why a defect was flagged.

Example 1: High-Risk Flow Deprioritized Due to Low Failure History (FinTech)

A fintech company introduced an AI-driven test prioritization tool to optimize its regression suite. The AI model analyzed historical failures and execution data and consistently deprioritized a fund transfer reconciliation test because it had rarely failed in the past.

From a data perspective, the AI decision was correct. However, this test covered a regulatory-critical flow that could result in financial penalties and compliance violations if it failed in production.

QA leads intervened and added business criticality and compliance tags to the test cases. Once the AI model was retrained with this domain-aware data, it correctly prioritized the reconciliation flow despite its low historical failure rate.

Example 2: Black-Box Predictions Reducing Trust (Healthcare)

A healthcare product team used AI to predict test failures in patient workflow scenarios. The AI flagged several tests as “high-risk” without explaining why. Testers struggled to trust the recommendations because there was no insight into whether the risk came from historical defects, data patterns, or environment instability.

After switching to a tool with explainability features, such as confidence scores and traceable decision paths, the team could clearly see the reasoning behind predictions. This transparency increased adoption and allowed testers to validate AI insights with domain knowledge.

How to Overcome This Challenge

AI cannot and should not run in isolation. In high-performing teams, humans stay firmly in the decision-making loop: the AI system proposes, and testers or subject matter experts validate.

Enrich AI models with domain-aware data. Label test cases with business criticality, compliance relevance, and risk level so that the model’s decisions are informed by more than historical failure patterns.
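To make the effect of domain labels concrete, here is a small sketch that blends historical failure rate with a business criticality weight. The tag names, the weights, and the 50/50 blend are all assumptions for illustration; a real tool would learn or configure these differently.

```python
from dataclasses import dataclass

# Assumed weights per criticality tag; real values would be agreed with domain experts.
CRITICALITY_WEIGHT = {"low": 0.1, "medium": 0.4, "high": 0.7, "regulatory": 1.0}

@dataclass
class TaggedTest:
    name: str
    failure_rate: float   # share of recent runs that failed, 0.0 to 1.0
    criticality: str      # one of the CRITICALITY_WEIGHT keys

def priority(test, history_weight=0.5):
    """Blend historical failure rate with a business criticality weight."""
    business = CRITICALITY_WEIGHT[test.criticality]
    return history_weight * test.failure_rate + (1 - history_weight) * business

suite = [
    TaggedTest("profile_avatar_upload", failure_rate=0.30, criticality="low"),
    TaggedTest("fund_transfer_reconciliation", failure_rate=0.01, criticality="regulatory"),
]
ranked = sorted(suite, key=priority, reverse=True)
print([t.name for t in ranked])
```

With failure history alone, the avatar upload test would rank first. Once the criticality tag is included, the rarely failing reconciliation test moves to the top, which mirrors the fintech example above.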

Tool selection is critical. Teams should select tools offering explainability, confidence scores, reasoning logs, traceable decision paths, and insights. Explainability turns AI from a black box into a trusted sidekick.

The ideal combination pairs the efficiency of AI with the judgment of a human. AI scales the analysis, while the human adds the context. Together they produce smarter and safer testing outcomes.

Challenge 3: Training and Adaptability of Models

One of the most underrated challenges in AI-powered testing is model maintenance. AI models are not fixed entities, and neither are applications: new functionality is constantly added, processes change, and user behavior shifts.

If models are not retrained continuously, they soon become outdated. A model can overfit to past trends, misread new defect patterns, or give weight to tests that are no longer relevant.

Unrealistic Expectations

Unrealistic team expectations make things worse. Many teams expect AI tools to run entirely on their own, without human intervention. That is not how these tools work.

Example 1: Outdated Model After Frequent Feature Releases (SaaS Application)

A SaaS product team implemented an AI-based test prioritization model that initially worked well. The model was trained using historical regression data and defect patterns from earlier releases.

Over time, the application evolved rapidly with frequent feature additions and UI changes. However, the AI model was not retrained regularly. As a result, it continued prioritizing tests related to legacy modules while ignoring newly introduced features that had higher defect density.

This caused critical defects in new workflows to escape into production. Once the team introduced scheduled retraining cycles aligned with sprint releases, the AI model began reflecting current application behavior and produced far more reliable prioritization results.

Example 2: Overfitting to Historical Failures (Banking Domain)

In a banking application, an AI-based failure prediction model was trained on years of historical test execution data. Because certain tests had failed repeatedly in the past due to unstable environments, the model learned to flag those tests as high risk even after the underlying issues were fixed.

This is a classic case of model overfitting. The AI focused too heavily on old patterns and failed to adapt to the improved system stability.

The QA team addressed this by:

- Cleaning historical data

- Weighting recent executions more heavily

- Retraining the model periodically

This helped the AI adapt to new trends instead of clinging to outdated failure patterns.
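One simple way to weight recent executions more heavily is exponential decay, where each run counts half as much for every fixed number of runs it lies in the past. The sketch below is an assumption about how such weighting could look, not the method the banking team used; `half_life` is a hypothetical tuning parameter.

```python
def recency_weighted_failure_rate(results, half_life=10):
    """Failure rate where a run counts half as much every `half_life` runs back.

    `results` is ordered oldest to newest; True means the run failed.
    """
    weighted_failures = 0.0
    total_weight = 0.0
    newest = len(results) - 1
    for index, failed in enumerate(results):
        age = newest - index              # 0 for the most recent run
        weight = 0.5 ** (age / half_life)
        total_weight += weight
        if failed:
            weighted_failures += weight
    return weighted_failures / total_weight if total_weight else 0.0

# 30 old failures from an unstable environment, then 30 clean runs after the fix.
runs = [True] * 30 + [False] * 30
plain_rate = sum(runs) / len(runs)
weighted_rate = recency_weighted_failure_rate(runs)
print(round(plain_rate, 2), round(weighted_rate, 2))
```

The plain failure rate still treats the test as failing half the time, while the weighted rate is much lower because the recent clean runs dominate.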

How to Overcome This Challenge

ML models should be treated as long-term assets. Track metrics such as prediction accuracy, false positive rate, and impact on test coverage. When performance deteriorates, retraining should be triggered.
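A retraining trigger of this kind can be sketched as a comparison between the model's failure predictions and what actually happened. The helper names and the thresholds below are placeholders chosen for illustration; each team would calibrate its own.

```python
def evaluate_predictions(predicted_fail, actual_fail):
    """Compute precision and false positive rate from parallel boolean lists."""
    pairs = list(zip(predicted_fail, actual_fail))
    tp = sum(1 for p, a in pairs if p and a)
    fp = sum(1 for p, a in pairs if p and not a)
    tn = sum(1 for p, a in pairs if not p and not a)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    false_positive_rate = fp / (fp + tn) if (fp + tn) else 0.0
    return {"precision": precision, "false_positive_rate": false_positive_rate}

def needs_retraining(metrics, min_precision=0.7, max_fpr=0.2):
    """Flag the model when either metric crosses its (assumed) threshold."""
    return (metrics["precision"] < min_precision
            or metrics["false_positive_rate"] > max_fpr)

predicted = [True, True, True, False, False, False, True, False]
actual = [True, False, False, False, False, True, False, False]
metrics = evaluate_predictions(predicted, actual)
print(metrics, needs_retraining(metrics))
```

In this sample only one of four predicted failures was real, so the check flags the model for retraining.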

Retraining cycles should be built into the test plan and draw on recent execution data, defect trends, and application changes. Feedback loops keep models consistent with current system behavior.

Instead of “one model to rule them all,” more and more projects opt for “many small models,” each designed for a specific purpose such as test prioritization, flaky test detection, or failure prediction.

CI/CD pipelines have an important role to play: they are a natural place to run scheduled retraining in step with releases.

Challenge 4: Tool Integration and Operational Fit

Introducing AI testing into an established testing environment often poses integration challenges, because most environments already have an automation framework, CI/CD pipelines, and test management systems in place.

Many AI tools available today are self-contained solutions, so teams must switch between different dashboards. Moreover, legacy systems with outdated infrastructure make it difficult to customize or connect tools through APIs.

When AI feels tacked on rather than an organic part of testing, teams will perceive its value as lower.

Example 1: AI Tool Becoming an Extra Dashboard (Enterprise QA Team)

A large enterprise QA team introduced an AI-based testing tool to improve regression efficiency. While the tool provided intelligent insights, it operated on a separate dashboard that was not integrated with their existing test management system.

Testers had to switch between tools to view AI recommendations and then manually update test cases or execution plans. Over time, this context switching reduced adoption, and many testers stopped using the AI insights altogether.

The team later integrated AI recommendations directly into their test management tool using APIs. Once AI insights appeared alongside regular test execution results, usage increased and the AI became part of daily workflows instead of an optional add-on.

Example 2: Pilot Use Case to Validate Integration Value (Agile Product Team)

An agile product team wanted to adopt AI-based testing but was cautious about large-scale disruption. Rather than integrating AI across the entire test suite, they started with a small pilot focused on flaky test detection.

This limited rollout helped them evaluate integration complexity, understand data flow challenges, and measure real value. Once the pilot proved successful, the AI tool was gradually integrated into CI pipelines and test reporting systems.

Example 3: CI/CD Misalignment Causing Operational Friction (DevOps Environment)

A team introduced AI-based test optimization without aligning it with their CI/CD pipelines. As a result, AI recommendations were generated after test execution rather than before, making them ineffective for release decisions.

After collaborating with DevOps teams, the AI system was embedded earlier in the pipeline, allowing test prioritization to happen before execution. This alignment made AI insights operationally useful and improved release speed without adding overhead.

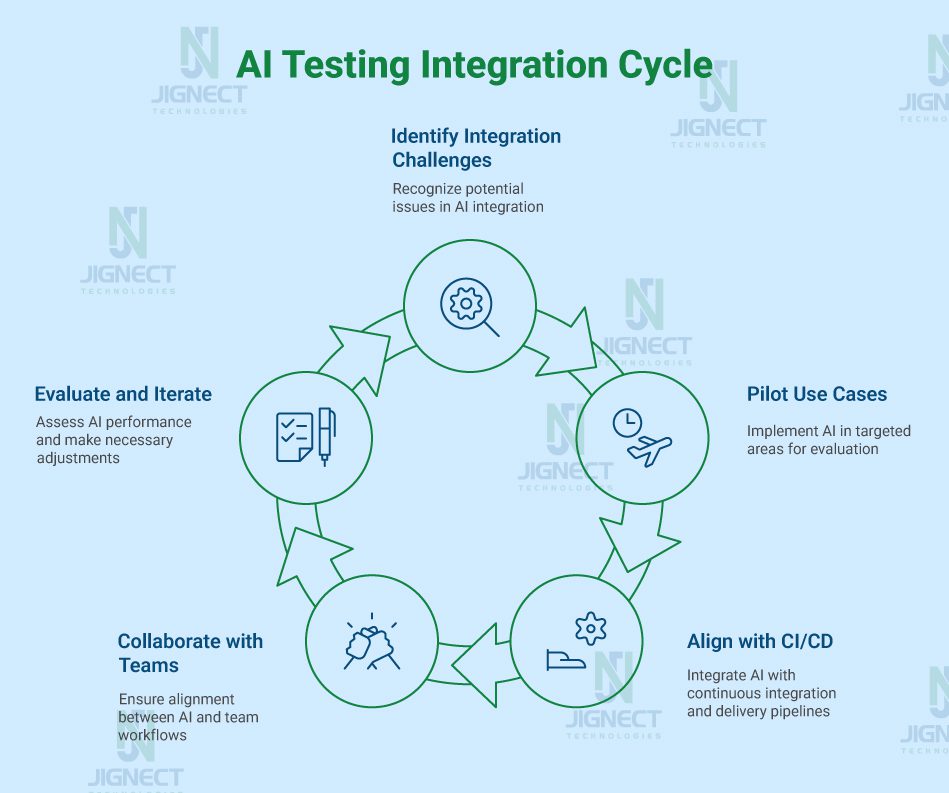

How to Overcome This Challenge

Successful teams follow a workflow-first approach. They don’t impose new processes on their existing testing workflows; they pick technologies that fit well with what’s already there.

Adoption should start with targeted pilot use cases such as test prioritization or flaky test identification, where value can be measured and integration options explored.

APIs, plugins, and lightweight middleware make it easier to embed AI insights into existing tools such as test management and defect tracking software.
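The middleware idea can be as small as a function that annotates existing test result records with AI fields, so recommendations appear next to regular results instead of on a separate dashboard. This is a sketch under stated assumptions: the record fields (`test_id`, `risk`, `reason`) and the `attach_recommendations` helper are hypothetical, and in a real setup both inputs would come from the respective tools' APIs.

```python
def attach_recommendations(test_results, recommendations):
    """Annotate result records with AI fields, leaving the originals untouched.

    Both inputs are plain lists of dicts here; their shape is assumed
    for illustration only.
    """
    by_test = {rec["test_id"]: rec for rec in recommendations}
    merged = []
    for result in test_results:
        rec = by_test.get(result["test_id"])
        merged.append({
            **result,
            "ai_risk": rec["risk"] if rec else None,
            "ai_reason": rec["reason"] if rec else None,
        })
    return merged

results = [
    {"test_id": "TC-101", "status": "passed"},
    {"test_id": "TC-102", "status": "failed"},
]
ai_output = [
    {"test_id": "TC-102", "risk": 0.82, "reason": "recent changes in payment module"},
]
merged_rows = attach_recommendations(results, ai_output)
for row in merged_rows:
    print(row)
```

Tests without a recommendation keep empty AI fields, so the existing report format stays valid even when the AI tool has nothing to say.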

Collaboration with DevOps and infrastructure teams is necessary to make the setup scalable and interoperable. The AI implementation must be well integrated with existing processes rather than working against them.

Challenge 5: Trust, Reliability, and Human-AI Collaboration

The thorniest problem to solve is trust. AI can analyze enormous data sets, but it’s not perfect. False positives, missed defects, and unstable predictions quickly erode confidence.

Teams often swing between blind faith in AI outputs and abandoning AI altogether after a few missteps. Both extremes limit real value.

Lack of transparency deepens the skepticism. When teams cannot understand why the AI made a decision, it is difficult to rely on it for critical releases.

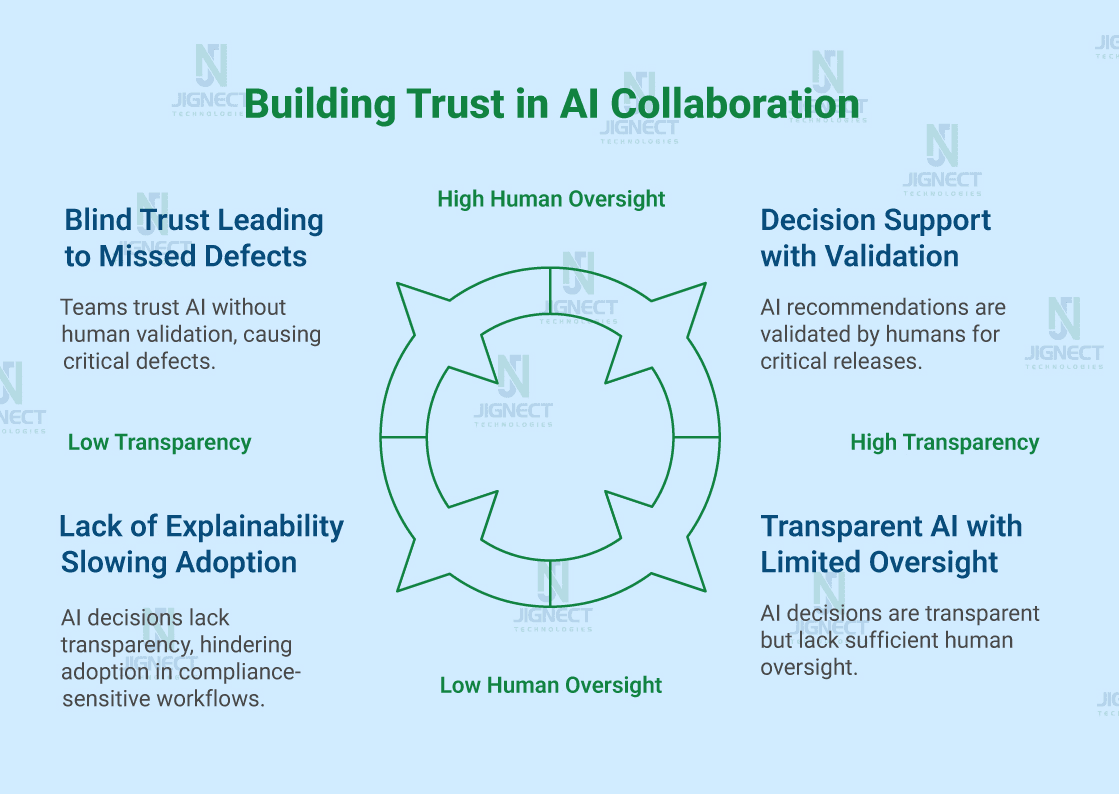

Example 1: Blind Trust Leading to Missed Critical Defects (Retail Platform)

A retail company adopted an AI-based test optimization tool that recommended skipping certain regression tests to reduce execution time before a major sale release. Trusting the AI output without human validation, the team accepted the recommendations as-is.

Unfortunately, one of the skipped tests covered a rare edge case in discount calculation. The issue went unnoticed and caused incorrect pricing during the sale, leading to customer complaints and revenue loss.

After this incident, the team redefined AI usage as decision support, not decision authority. AI recommendations were reviewed by testers for high-risk releases, significantly reducing similar issues in future deployments.

Example 2: Lack of Explainability Slowing Adoption (Healthcare Domain)

A healthcare QA team used an AI model that flagged high-risk test cases but provided no explanation for its decisions. Testers were reluctant to rely on the model for compliance-sensitive workflows because they couldn’t justify AI decisions during audits.

The team later adopted a tool that provided confidence scores and traceable reasoning. This transparency allowed testers to validate AI recommendations and explain them to stakeholders, improving both trust and adoption.

How to Overcome This Challenge

Trust is built over time, in layers: transparency, validation, accountability. AI outputs should be used as guidance, not as the final decision.
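Treating AI as decision support can be made explicit with a small routing rule: a recommendation is only applied automatically when the stakes are low and the model is confident, and everything else goes to a tester. The action names, criticality labels, and the 0.9 threshold in this sketch are assumptions for illustration.

```python
def route_recommendation(action, confidence, criticality, min_confidence=0.9):
    """Decide whether an AI recommendation is applied or sent to a tester.

    Skipping a test is only automated for low-criticality tests with high
    model confidence; any other skip goes to human review. Recommendations
    to run a test are applied directly, since running extra tests is safe.
    """
    if action == "skip" and (criticality != "low" or confidence < min_confidence):
        return "human_review"
    return "auto_apply"

print(route_recommendation("skip", 0.95, "low"))
print(route_recommendation("skip", 0.95, "high"))
print(route_recommendation("skip", 0.60, "low"))
print(route_recommendation("run", 0.60, "high"))
```

Under a rule like this, a confident recommendation to skip a high-criticality test, such as the discount calculation case above, would reach a tester before a major release instead of being applied as-is.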

Feedback loops are key: as testers validate or reject AI recommendations, those verdicts should flow back into the model to improve it over time.
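A feedback loop starts with recording what testers decided. The sketch below summarizes tester verdicts into an acceptance rate and a list of rejected recommendations that could be fed into the next retraining run; the record shape and the `summarize_feedback` helper are hypothetical.

```python
from collections import Counter

def summarize_feedback(feedback):
    """Summarize tester verdicts on AI recommendations.

    `feedback` is a list of dicts with `test_id`, `recommendation`, and
    `verdict` ("accepted" or "rejected"); this shape is assumed.
    """
    counts = Counter(item["verdict"] for item in feedback)
    total = sum(counts.values())
    acceptance_rate = counts["accepted"] / total if total else 0.0
    # Rejected recommendations are kept as candidate retraining examples.
    rejected = [item["test_id"] for item in feedback if item["verdict"] == "rejected"]
    return {"acceptance_rate": acceptance_rate, "rejected_tests": rejected}

feedback = [
    {"test_id": "TC-7", "recommendation": "skip", "verdict": "accepted"},
    {"test_id": "TC-9", "recommendation": "skip", "verdict": "rejected"},
    {"test_id": "TC-12", "recommendation": "prioritize", "verdict": "accepted"},
    {"test_id": "TC-15", "recommendation": "prioritize", "verdict": "accepted"},
]
summary = summarize_feedback(feedback)
print(summary)
```

A falling acceptance rate is an early sign that the model and the testers are drifting apart, well before defects escape.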

Objective performance metrics such as prediction accuracy, false positive rate, and missed defect trends show teams where AI does well and where human oversight is still required.

Ultimately, accountability is human. AI supports decision making, but quality is owned by the tester. When AI is positioned as a collaborator and not a replacement, trust in it comes far more naturally.

Conclusion: Applying AI-Based Testing in the Real World

AI-based testing solutions have immense potential for business impact and can change the face of quality engineering, but only when used properly.

Poor data quality, unclear domain context, neglected model upkeep, integration difficulties, and lack of trust are interrelated. Failing to address any one of them undermines the value AI can deliver.

Successful teams invest in clean data, add domain expertise to AI outcomes, maintain their models, integrate AI into existing workflows, and keep human judgment in the loop throughout.

AI is not about replacing the tester. It’s about equipping testers with sharper insights, improved focus on what matters, and the ability to set informed priorities. The combination of human know-how and AI leads to intelligent automation and, most importantly, high-quality software.

Happy Testing 🙂