Getting started with K6 is usually simple: write a short script, spin up a few virtual users, and send some basic HTTP requests. But once you start testing real-world systems, that simplicity fades. The scenarios get more complex, the data more dynamic, and you quickly see that the basics aren’t enough.

This is exactly where K6’s advanced side steps in. In this guide, we’ll break down how to make your tests mirror real user behavior, how to make them resilient enough to handle heavier traffic, and how to uncover the insights buried in your performance data. By the end, you’ll be equipped to move beyond basic scripts and build tests that truly push your system under real-world conditions.

Why Advanced K6 matters

While K6 is known for its simplicity and performance, basic scripts rarely reflect real-world load scenarios. Advanced scripting empowers teams to simulate realistic workflows, measure SLIs, and analyze system behavior under varying stress conditions.

Advanced K6 matters because:

- Business flows are complex. Real users don’t hit a single endpoint in isolation.

- Test coverage needs flexibility. You must handle branching logic, retries, error tolerance.

- Teams need automation-ready, scalable test code.

Common limitations of basic K6 tests

Basic K6 tests usually:

- Use static data.

- Test only individual endpoints.

- Lack error handling.

- Don’t simulate user think-time or real branching logic.

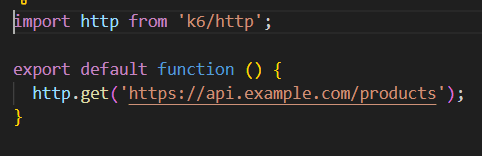

Example:

Above is a good start, but doesn’t represent how users search, filter, or navigate.

Why These Limitations Matter

These gaps aren’t just technical; they directly impact the accuracy and reliability of your performance results. Here’s how:

- Missed slow endpoints: Testing endpoints one by one rarely shows you where the real slowdown lives. The actual bottlenecks usually show up in places like search, filtering, cart updates, or checkout flows, things that only surface when a user moves through the application naturally.

- Hidden concurrency issues: Basic tests also gloss over problems that only happen when many users hit the system at the same time. That’s when you start seeing database locks, shared resource conflicts, race conditions, and all the messy realities of real-world traffic.

- Auth failures & rate limits: Without realistic flows, issues like token expiry, login throttling, and API rate limits go unnoticed until they happen in production.

- False confidence in system performance: Your app may look “fast” in basic tests but collapse under real user behavior.

- Inaccurate capacity planning: Teams may underestimate infrastructure needs because they’re not capturing realistic traffic patterns.

Need for Real-World Performance Simulation

Modern systems include login flows, retries, page transitions, and asynchronous behavior. To test real-world conditions, your test should:

- Emulate user paths, not endpoints.

- Include think-time (sleep()).

- Handle different data and dynamic responses.

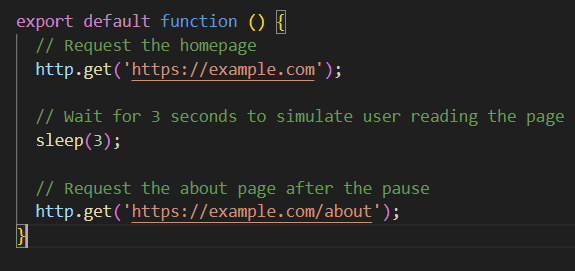

Example:

First, the script goes to the homepage. Then it waits for about 3 seconds kind of like a user taking a moment to check things out. After that pause, it goes and grabs the about page. Adding a sleep() here just helps make the test feel less robotic and more like a real person using the site.

Designing Complex Test Plans in K6

Designing complex performance test plans in K6 means creating realistic, maintainable, and modular test journeys that reflect real-world user behavior. This approach helps your tests closely mirror how people actually use your application, while also making your scripts easier to scale and manage over time.

For running a basic k6 script, refer to our guide: Mastering Performance Testing with K6 – A Guide for QA Testers

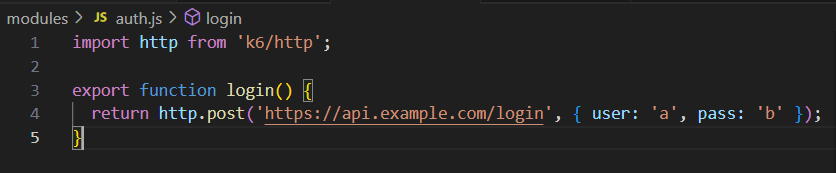

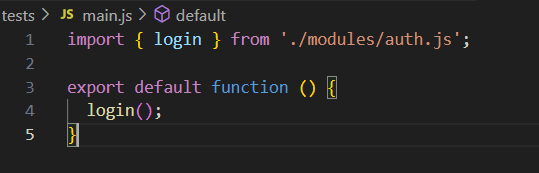

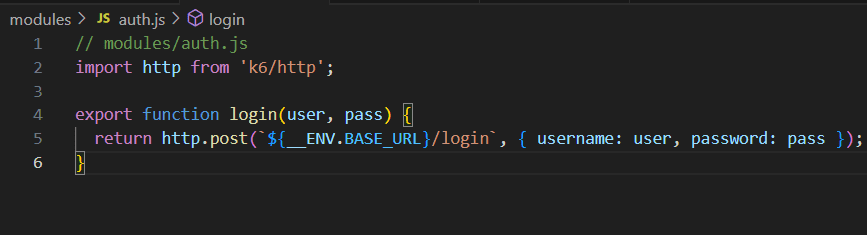

Modularizing Scripts Using JavaScript Modules

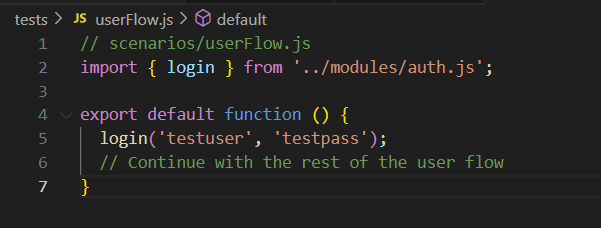

To keep your tests clean and reusable, break logic into separate JavaScript modules. This enables you to isolate steps like login, product search, and checkout into their own files and import them where needed.

Directory Structure:

- /tests
  - main.js
- /modules
  - auth.js
  - product.js

Using modules makes it easier to share logic across multiple test scripts and maintain them independently.

Why Modular Design Matters

Modular test design isn’t just a “clean code” thing; it genuinely makes day-to-day work easier when you’re dealing with growing K6 scripts.

- Faster debugging: When login, search, checkout and other flows sit in their own modules, it becomes much simpler to trace where something broke instead of digging through one large script.

- Less duplicate code: Shared functions like login, headers, token management, or product lookup stay in one place, reducing repetition across scripts.

- Smoother CI/CD integration: Modular tests plug nicely into pipelines because individual modules can be reused, updated, or extended without touching the entire suite.

- Better scalability: As your test suite grows, adding new user journeys or endpoints feels a lot lighter. You just plug in another module without touching everything else.

- Cleaner collaboration: When the logic is split up, teammates can work on different parts of the test plan without stepping on each other’s changes or merging huge files.

Folder Structures for Maintainable K6 Test Suites

Organizing your test files into folders by purpose improves readability and collaboration. A clean structure also makes it easier to plug into CI pipelines.

Suggested Structure:

/k6-tests

/modules → Reusable logic (login, search, etc.)

/data → Store your test data files here, such as CSVs or JSON.

/tests → Individual test flows

/utils → Helpers (e.g., UUID generator)

config.js → Global configuration

Such a layout is essential for scaling test coverage and separating concerns.

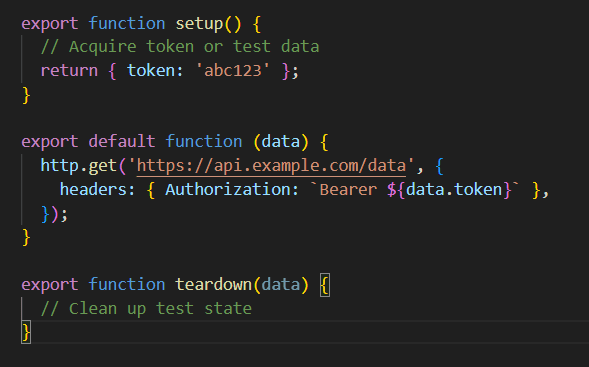

Sharing Setup and Teardown Logic Across Tests

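K6 provides setup() and teardown() functions to get your test environment ready and clean up afterward. setup() runs once before the load starts and teardown() runs once after it ends, and whatever setup() returns is passed to both the default function and teardown(). They’re especially useful for tasks like logging in users or pre-loading data before running your tests.

These lifecycle functions help manage complex test environments and keep each run from depending on data left behind by a previous one.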

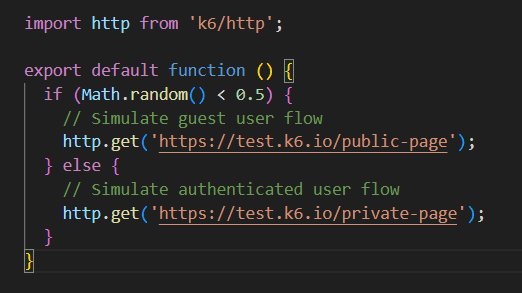

Logic Implementation in K6 (Conditional Execution)

When testing performance in real-world scenarios, users rarely follow a perfectly linear or predictable journey. Some users log in, others remain guests. Some access premium content, others stick to the basics. Conditional execution in K6 allows your test scripts to replicate these diverse behaviors using familiar JavaScript constructs like if/else, switch, or conditions based on API responses.

Conditional execution lets you:

- Split traffic between guest and logged-in users.

- Trigger different workflows based on user roles (admin, user, premium).

- Randomize scenarios to simulate unpredictable traffic patterns.

Example:
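As a minimal sketch of a traffic split (the journey names and weights below are made up for illustration, not taken from a real project), the choice of journey can live in a small plain-JavaScript helper. The random roll is passed in as a parameter so the helper can be tried outside K6 as well.

```javascript
// Hypothetical journeys and their share of traffic (weights add up to 100).
const JOURNEYS = [
  { name: 'guest', weight: 60 },
  { name: 'loggedIn', weight: 30 },
  { name: 'admin', weight: 10 },
];

// Maps a roll in [0, 1) to a journey name by walking the cumulative weights.
function pickJourney(roll) {
  const total = JOURNEYS.reduce((sum, journey) => sum + journey.weight, 0);
  const target = roll * total;
  let cumulative = 0;
  for (const journey of JOURNEYS) {
    cumulative += journey.weight;
    if (target < cumulative) {
      return journey.name;
    }
  }
  // Fallback for a roll of exactly 1 or rounding at the upper edge.
  return JOURNEYS[JOURNEYS.length - 1].name;
}

// Inside a K6 default function you could call pickJourney(Math.random())
// and branch with if/else or switch on the returned name.
```

Keeping the weights in one array means the traffic mix can be changed without touching the branching code.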

Using if, while, and Loops for Dynamic Control

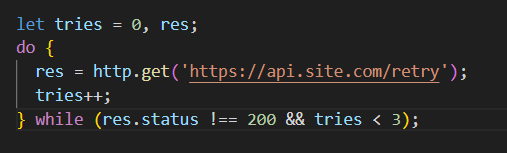

Loops are vital for simulating repeat actions like pagination or retry logic. These can make your test scripts more resilient and aligned with realistic workflows — especially when dealing with APIs that might fail intermittently or respond with paginated data.

Retry Mechanism with do-while

When an API might fail intermittently, retrying can improve test realism and simulate retry logic used in production clients:

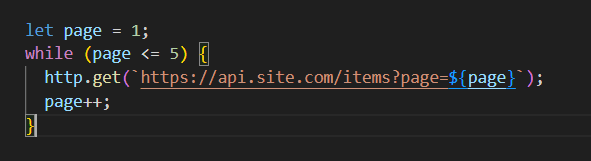
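The do-while retry described above can be sketched roughly as follows. This is an illustration under assumptions: the helper name requestWithRetry is hypothetical, and the request and pause functions are injected so the loop itself stays plain JavaScript. In a K6 script they would typically be a wrapper around http.get() and K6's sleep().

```javascript
// Calls `send` until it returns a response with status 200 or the attempt
// budget is used up. `send` and `pause` are injected dependencies; in a K6
// script they could be () => http.get(url) and sleep.
function requestWithRetry(send, maxAttempts, pause) {
  let attempt = 0;
  let res;
  do {
    attempt += 1;
    res = send();
    if (res.status !== 200 && attempt < maxAttempts) {
      pause(1); // wait one second before the next attempt
    }
  } while (res.status !== 200 && attempt < maxAttempts);
  return { res, attempts: attempt };
}
```

Returning the attempt count alongside the response makes it easy to feed retries into a custom metric later, so flaky endpoints show up in the results instead of being hidden by the retry.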

Handling Pagination

Many APIs paginate their responses. Looping through pages helps you test the complete dataset and backend performance under load:

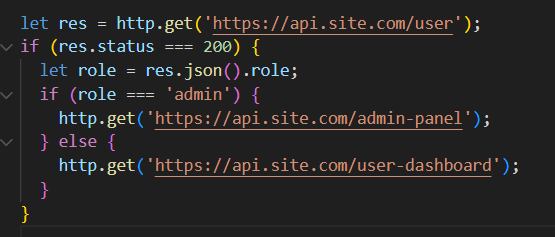
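A pagination loop along these lines might look like the sketch below. The fetchPage callback and the maxPages cap are assumptions for illustration; in a K6 script the callback could wrap an http.get() call and return the parsed items for one page.

```javascript
// Walks a paginated endpoint until a page comes back empty or maxPages is hit.
// `fetchPage(page)` is a hypothetical callback returning the items of one page.
function fetchAllPages(fetchPage, maxPages) {
  const items = [];
  let page = 1;
  while (page <= maxPages) {
    const batch = fetchPage(page);
    if (!batch || batch.length === 0) {
      break; // an empty page means there is nothing left to read
    }
    items.push(...batch);
    page += 1;
  }
  return items;
}
```

The maxPages cap is a safety net: without it, an API that never returns an empty page would keep a virtual user looping for the whole test.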

Real-time decision-making based on response values

Sometimes, test behavior should adapt based on what a system returns. For instance, if a user has a specific role or if a request returns a particular status, your script can dynamically respond and branch accordingly.

This helps simulate real application logic, such as showing different dashboards for different user types or triggering additional API calls for specific conditions.

Example:

This dynamic control makes your scripts more adaptive and reflective of actual user behavior.

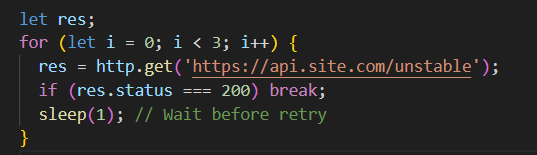
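To illustrate branching on what the system returns, here is a small sketch that picks follow-up requests from a login response. The role field and all of the paths are hypothetical stand-ins for whatever your API actually exposes.

```javascript
// Decides which pages a virtual user visits next, based on the login response.
// `status` is the HTTP status code; `body` is the parsed JSON body (or null).
function nextPathsFor(status, body) {
  if (status !== 200) {
    return []; // login failed, so there is no journey to continue
  }
  const role = body ? body.role : undefined;
  switch (role) {
    case 'admin':
      return ['/admin/dashboard', '/admin/reports'];
    case 'premium':
      return ['/dashboard', '/premium/content'];
    default:
      return ['/dashboard'];
  }
}

// In a K6 script: nextPathsFor(res.status, res.json()).forEach(...) with an
// http.get() call per path.
```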

Error handling and retries inside flow control

In real-world environments, network instability and temporary errors are quite common. To keep test scripts stable, you can implement retries and graceful fallbacks in K6 with ordinary loops and response checks.

You can combine retry logic with validation tools like check() to log or assert behaviors.

Graceful Retry with For Loop

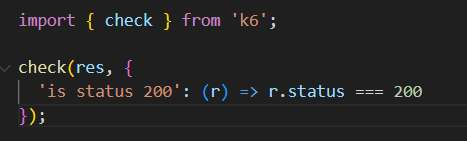

Validate Response Using check()

The check() function in K6 helps you watch how your system behaves while tests are running. If something goes wrong, the test keeps going—it just notes the issue. This way, you can catch problem areas or slow spots in your system without having the test stop on you.

Every failed check shows up individually in the summary, so it’s easier to spot and track issues that keep happening.
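As a rough sketch, the checks for one flow can be kept in a single object whose keys are the labels that appear in the summary. The labels and the 500 ms limit are illustrative assumptions; the response fields used (status, body, timings.duration) are the ones K6 responses carry.

```javascript
// A reusable set of named predicates in the shape K6's check() expects:
// each key is the label shown in the summary, each value tests the response.
const loginChecks = {
  'status is 200': (r) => r.status === 200,
  'body has token': (r) => typeof r.body === 'string' && r.body.includes('token'),
  'responds under 500ms': (r) => r.timings.duration < 500,
};

// In a K6 script: check(res, loginChecks);
```

Because the object is plain JavaScript, the same set of checks can be shared by every script that exercises the login flow.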

Timers and Custom Thresholds

Simulating real-world user behavior and validating performance expectations often require more than just sending requests. K6 lets you combine timers, delays, thresholds, and custom metrics to mirror real-world usage and ensure your SLAs/SLOs are met.

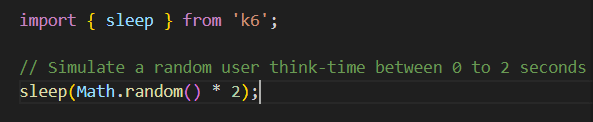

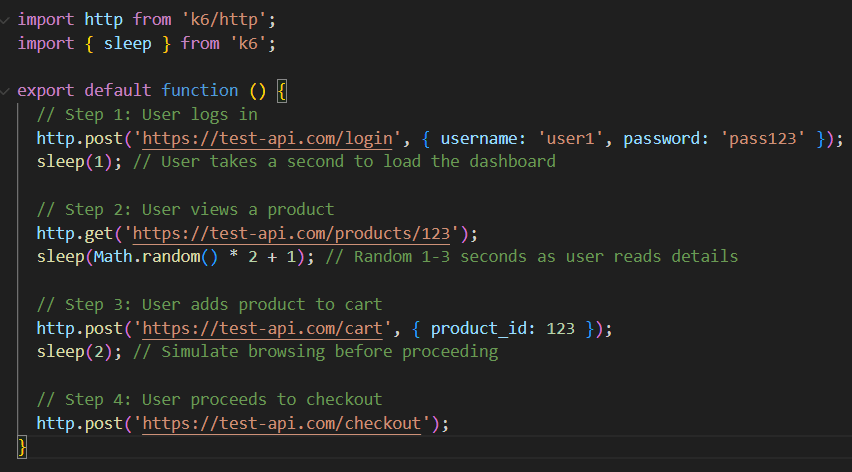

Introducing Deliberate Delays with sleep()

In real life, users don’t instantly click through pages; they pause to read, think, or navigate. The sleep() function helps you simulate these realistic “think times” between user actions.

To make your virtual user flows feel more like real users, you can add sleep between steps. This lets your system catch its breath instead of getting slammed by sudden bursts of activity.

For example, a typical user might log in, look around a product, and then complete checkout. Adding pauses between these steps helps mimic that real-world behavior.

This approach spreads out the load more realistically, instead of creating artificial traffic spikes. It also makes it easier to spot and fix performance issues because you’re testing under conditions that reflect how people actually use your system.

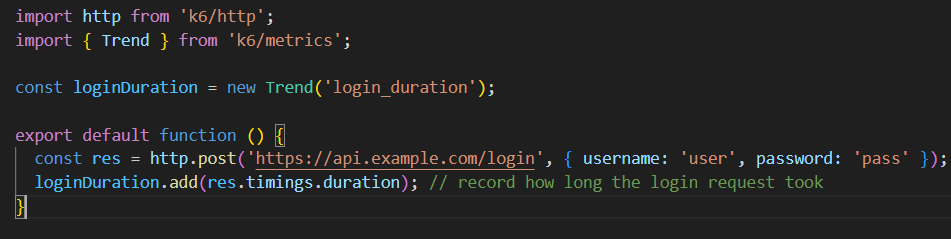
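Real users don't all pause for exactly the same time, so one common refinement is to randomize the think time within a range. The helper below is a sketch of that idea; the name thinkTime and the optional random parameter are assumptions made for illustration.

```javascript
// Returns a pause length in seconds between minSeconds and maxSeconds.
// `random` defaults to Math.random and can be swapped for repeatable runs.
function thinkTime(minSeconds, maxSeconds, random = Math.random) {
  return minSeconds + random() * (maxSeconds - minSeconds);
}

// In a K6 script: sleep(thinkTime(1, 5)); // pause between 1 and 5 seconds
```

With a fixed pause, every virtual user tends to hit the next step at the same moment; a randomized pause spreads those requests out.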

Creating custom Trend metrics for detailed timing

K6 lets you create custom metrics so you can track and analyze the performance aspects that matter most in your test flows. One type that’s used a lot is called “Trend.” It keeps track of values over time, such as how long things take or their size. This helps you look at things like averages and percentiles later on.

For example, if you want to see how long a login request takes:
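One way this could look, as a minimal sketch rather than the original listing: a small helper records each response's duration into a Trend. The helper name recordDuration and the metric name login_duration are hypothetical; Trend and its add(value, tags) method come from K6's k6/metrics module.

```javascript
// Records a response's total duration (in milliseconds) into a Trend-like
// metric. `trend` is anything with an add(value, tags) method, such as a
// K6 Trend; `res.timings.duration` is the timing K6 attaches to a response.
function recordDuration(trend, res, tags) {
  trend.add(res.timings.duration, tags);
  return res; // hand the response back so calls can be chained
}

// In a K6 script, after `import { Trend } from 'k6/metrics'`:
//   const loginDuration = new Trend('login_duration', true);
//   recordDuration(loginDuration, http.post(loginUrl, payload), { flow: 'login' });
```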

You can also set up multiple custom Trends, each one designed for a different part of your test. This really helps you zero in on exactly where any slowdowns or bottlenecks might be happening.

Leveraging xk6 Extensions

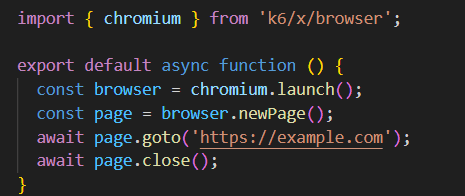

K6 is powerful on its own, but when you need to go beyond HTTP-based testing — such as interacting with browsers, Kafka, or databases — xk6 extensions come into play.

What is xk6 and How to Use It

xk6 is a tool from the K6 team that lets you add custom extensions to the K6 runtime, such as:

- xk6-browser: Run browser-level (frontend) tests using Chromium

- xk6-kafka: Test Kafka systems

- xk6-sql: Run SQL queries as part of your test

- xk6-mqtt: Test MQTT-based messaging systems

They’re especially handy when testing non-HTTP systems or when you need full browser simulation.

Setting It Up Locally (Using VS Code or Terminal)

You will build a custom K6 binary that includes your desired extensions.

Step 1: Install Go

Make sure you have Go installed (required to build xk6).

go version

Step 2: Install xk6

Just open a terminal, either your system’s or the one inside VS Code, and install xk6 by running this command:

go install go.k6.io/xk6/cmd/xk6@latest

Step 3: Build a Custom K6 Binary

In the terminal, run this command to build a custom version of K6 with the extension you want, for example, for browser testing:

xk6 build --with github.com/grafana/xk6-browser

This will create a new k6 binary file in your directory.

You can now run tests using this binary:

./k6 run your-script.js

In VS Code, you can do all of this straight from the terminal (View > Terminal). Just make sure you’re in the right folder first.

Using xk6-browser for frontend performance Testing

Once built, you can write scripts like this to simulate real browser interactions:

This is helpful for:

- Testing client-side rendering

- Measuring load times in real browsers

- Capturing frontend failures that HTTP tests might miss

Running and Managing Tests Locally

Running your performance tests locally is the fastest way to iterate, catch issues as they happen, and make sure your scripts work before scaling them in the cloud or CI.

Setting up K6 for Local Testing with Real Data

Testing with static or hardcoded values is fine for basic validation, but real-world scenarios demand dynamic and realistic data. K6 supports loading external data sources like JSON and CSV to make test runs reflect actual usage.

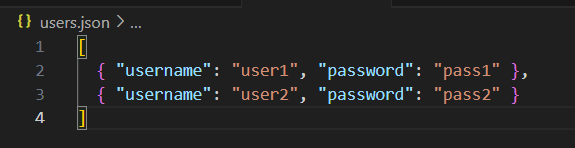

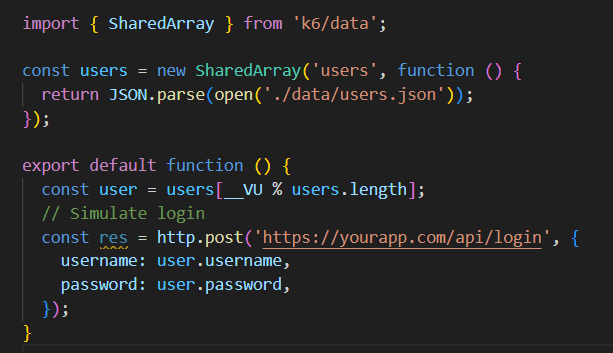

Example: Load user credentials from a JSON file:

Using SharedArray ensures the data is loaded only once and shared across virtual users, improving performance and consistency.

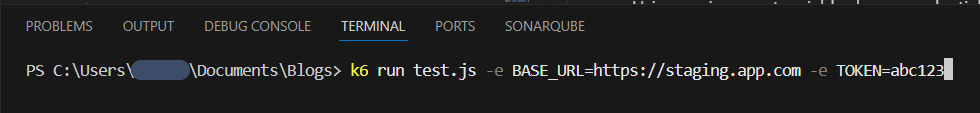

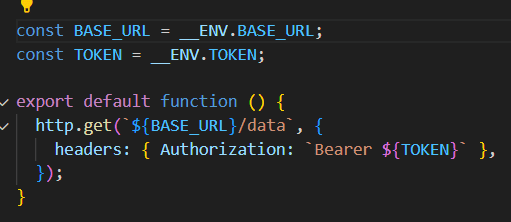
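Once the data is loaded, each virtual user still needs to pick a record. The sketch below shows one simple way to do that; the helper name userForVu and the file path in the comment are assumptions for illustration.

```javascript
// Picks one record per virtual user so that VUs do not all log in with the
// same account. vuId is 1-based, matching K6's __VU in the default function.
function userForVu(users, vuId) {
  return users[(vuId - 1) % users.length];
}

// In a K6 script, with the data loaded once in the init context:
//   const users = new SharedArray('users', function () {
//     return JSON.parse(open('./data/users.json')); // hypothetical path
//   });
//   const user = userForVu(users, __VU);
```

The modulo wraps around when there are more virtual users than records, so the script keeps running with a small data file, at the cost of some accounts being shared.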

Managing Environments Using CLI Variables

When running performance tests, you usually need one script that can work in dev, staging, UAT, or production. Hardcoding URLs, API tokens, or other environment-specific settings ties the test to just one environment.

K6 makes this easy; you can pass in these values at runtime using environment variables. This keeps your scripts flexible, secure, and easy to use across any environment.

The Problem with Hardcoding

Let’s say your test has this line:

http.get('https://staging.app.com/api/data');

Every time you want to run this test in dev or prod, you’d have to go in and change the script. That’s annoying and easy to mess up.

Now imagine doing this for:

- Base URLs

- Authorization tokens

- Feature toggles

- Specific user IDs

- Log levels (debug vs. production)

It becomes unmanageable very quickly.

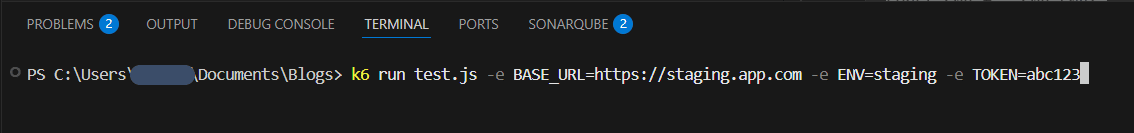

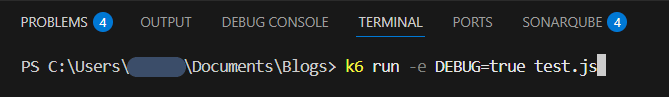

The Better Approach: CLI Environment Variables

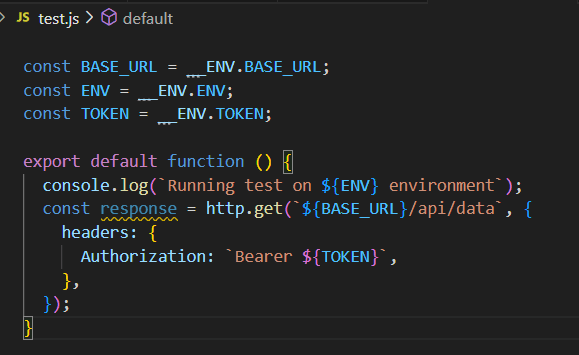

K6 supports passing environment variables using the -e flag. These are accessible inside your script using the __ENV object.

Example:

Your test script can then use those values:
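As a hedged sketch, reading those values can be wrapped in one function that takes the environment object and fails early when something is missing. The variable names BASE_URL and API_TOKEN are assumptions chosen for illustration, and the command in the comment is just one possible invocation.

```javascript
// Builds the run configuration from an environment object such as K6's __ENV.
// Example invocation (hypothetical values):
//   k6 run -e BASE_URL=https://staging.app.com -e API_TOKEN=abc123 script.js
function loadConfig(env) {
  if (!env.BASE_URL) {
    throw new Error('BASE_URL is required (pass it with -e BASE_URL=...)');
  }
  return {
    baseUrl: env.BASE_URL.replace(/\/+$/, ''), // drop trailing slashes
    token: env.API_TOKEN || '',
  };
}

// In a K6 script: const config = loadConfig(__ENV);
//                 http.get(config.baseUrl + '/api/data');
```

Failing at startup when BASE_URL is missing is a deliberate choice here: it is easier to notice than a test that quietly sends requests to an undefined host.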

Now, your same script can easily be reused across all environments without modification.

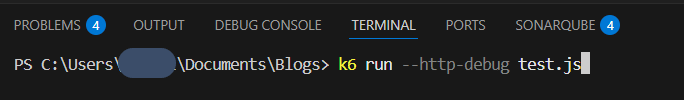

Debugging Tests with --http-debug and Logging

K6’s built-in debugging tools make it way easier to track down failed requests, weird behavior, or messed-up payloads.

Use --http-debug to inspect all HTTP requests/responses:

This flag outputs detailed logs including:

- Method and URL

- Request headers (and the request body when you run with --http-debug="full")

- Response status code

- Response body (only with --http-debug="full"; bodies are left out by default)

- Time taken for each request

This is invaluable when you’re unsure why a request is failing or need to inspect backend behavior under load.

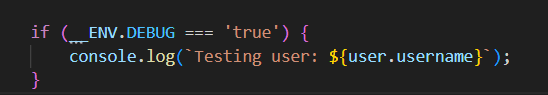

Custom logging with console.log

K6 also supports logging within scripts using console.log(), which can be conditionally enabled for debugging without cluttering normal output.

You can toggle this with:
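One possible toggle, sketched under the assumption of a DEBUG environment variable (a name picked here for illustration), is a logger that only prints when the flag is set, for example with k6 run -e DEBUG=true script.js.

```javascript
// Creates a logger that prints only when DEBUG=true is set in `env`.
// `sink` is where messages go; it defaults to console.log.
function makeDebugLog(env, sink = (...args) => console.log(...args)) {
  const enabled = env.DEBUG === 'true';
  return (...args) => {
    if (enabled) {
      sink(...args);
    }
  };
}

// In a K6 script: const debug = makeDebugLog(__ENV);
//                 debug('login response status:', res.status);
```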

Just remember to avoid extensive logging in high-load tests: too many logs can slow down test execution and skew performance results.

Best Practices for Scalable and Reliable K6 Testing

Designing advanced K6 tests is about more than just sending requests — it’s about building a maintainable, scalable framework for performance testing that can evolve alongside your application.

Structure tests for scalability and reuse

A good folder structure makes your performance tests way easier to manage. If things are all over the place, scripts get messy, logic gets duplicated, test scenarios can go out of sync, and new team members take forever to figure it out.

Recommended folder structure:

/k6-tests

/modules → Reusable functions for common flows (login, search, checkout)

/data → Stores static files or generated input data in formats like CSV or JSON

/tests → Full user flows designed to replicate authentic interactions

/utils → Helper functions (UUID generator, random delays, time formatters)

config.js → Global constants & defaults

Example: Modular Login Implementation

This modular approach encourages code reuse: change login logic once, and all tests are updated automatically. It also makes troubleshooting faster, since any issue in a core function can be fixed in one place. As the project grows, adding new flows becomes easier because the building blocks are already available.

Use Environment Variables to Manage Secrets

Putting things like tokens, passwords, or environment URLs directly into your scripts isn’t just risky from a security standpoint; it also makes it harder to run the same tests across different environments.

Example — Passing Variables at Runtime

Environment variables let you keep sensitive details out of your code and make it easy to run the same tests across dev, staging, or production. And when you use a secrets manager like AWS Secrets Manager, Vault, or Azure Key Vault, you don’t risk credentials getting checked into your code or repo.

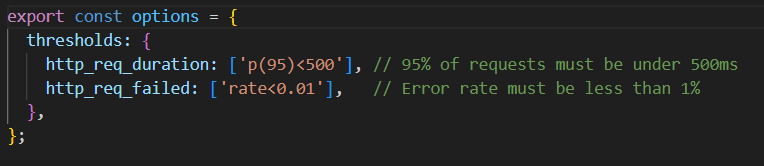

Set thresholds and treat performance like code

Performance tests should tell you right away if something’s off. Adding thresholds to your scripts means they can run in CI/CD and even stop a deployment if the app doesn’t meet the SLA.

Example — Setting Thresholds in K6:

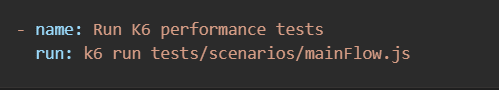

Example — CI Pipeline Step:

By defining clear thresholds, performance testing becomes a gatekeeper in the release process. Different endpoints can have different targets; for example, a login request might require sub-300ms latency, while a bulk report export can have a higher threshold.
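Per-endpoint targets like these can be generated from a small table, as in the sketch below. It assumes each request is tagged with a name (for example http.get(url, { tags: { name: 'login' } })), and the endpoint names and limits are illustrative values, not recommendations.

```javascript
// Builds a K6 thresholds object from per-endpoint p95 latency targets (ms)
// and a maximum share of failed requests (0.01 means 1%).
function buildThresholds(p95TargetsMs, maxErrorRate) {
  const thresholds = {
    http_req_failed: [`rate<${maxErrorRate}`],
  };
  for (const [name, ms] of Object.entries(p95TargetsMs)) {
    // Tag filter syntax: metric{tag:value} limits the threshold to that tag.
    thresholds[`http_req_duration{name:${name}}`] = [`p(95)<${ms}`];
  }
  return thresholds;
}

// In a K6 script:
//   export const options = {
//     thresholds: buildThresholds({ login: 300, report_export: 5000 }, 0.01),
//   };
```

When any threshold fails, k6 run finishes with a non-zero exit code, which is what lets a CI step stop the pipeline.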

Checklist for Advanced K6 Tests

Before you run or finalise any advanced K6 script, make sure your test suite covers these essentials:

Script Design & Structure

- Modularized flows (auth, search, checkout, utils, etc.) split into separate JS modules

- Clean folder structure: /modules, /tests, /data, /utils, config.js

- Shared setup() and teardown() for environment prep and cleanup

- No hardcoded URLs, tokens, or config (use CLI -e environment variables)

Logic & Control Flow

- Conditional flows for realistic user journeys (login vs guest, role-based behavior)

- Loops for retries, pagination, and dynamic decision-making

- Error-handling logic using check() and fallback paths

- sleep() used intentionally to mimic user think-time

Metrics & Thresholds

- Custom metrics (Trend, Counter, Gauge) added for fine-grained insights

- Thresholds defined for SLAs/SLOs (latency, errors, throughput)

- Scenario-level load patterns (ramping, stages, arrival-rate) set correctly

Data & Test Realism

- Dynamic test data loaded from CSV/JSON using SharedArray

- Randomization added where needed (user IDs, product search terms)

- Proper headers, tokens, and cookies reused across flows

Extensions & Integrations

- xk6 extensions used where needed (browser, SQL, Kafka, MQTT)

- Custom K6 binary built when using non-HTTP workflows

- Ready for CI/CD with clear exit criteria and thresholds

Debugging & Validation

- Tested locally with --http-debug or conditional console.log

- Response validation checks in place

- Script verified with small/local load before cloud or large-scale runs

Conclusion

In this blog, we took a closer look at the advanced side of K6, going well beyond simple load-testing scripts. We built test setups that scale easily and stay modular, making them reusable across different scenarios. We also explored how to add dynamic data, use conditional logic, and change up user journeys so tests feel a lot more like real-world traffic. On top of that, we looked at creating custom metrics and thresholds for deeper insights, plugging K6 into CI/CD pipelines for automatic checks, extending it with xk6 modules, handling different environments, and keeping sensitive data safe.

In the end, K6 proves it can do a lot more than simple load testing. With the right approach, you can mirror how real users behave, track the numbers that actually matter, and catch issues before they ever slow things down. It’s less about writing longer scripts and more about building smarter ones that grow with your app. Used this way, K6 isn’t just another tool you run; it becomes part of how you deliver fast, reliable experiences.


Happy Testing 🙂