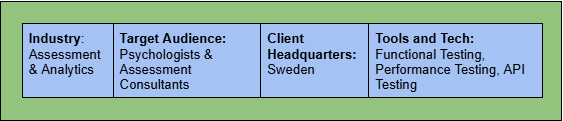

Enhancing Reliability and Performance of a Global Neuroscience Assessment & Analytics Platform

Client Overview

In today’s data-driven and assessment-focused digital landscape, organizations increasingly depend on secure and scalable psychometric platforms to conduct evaluations, collect user data, and derive actionable insights. Ensuring functional accuracy, performance stability, and consistent user experience across browsers and regions is critical, particularly when assessments are time-bound and data-sensitive.

The client, a global assessment and analytics service provider, operates a web-based psychometric platform used to administer digital tests, manage administrative workflows, and generate analytical reports. The platform integrates assessment engines, REST-based services, multilingual content support, and reporting modules to deliver reliable and standardized evaluation experiences across geographies.

Goals

- Ensure stable and accurate end-to-end execution of digital assessment workflows

- Validate cross-browser compatibility across modern web browsers

- Assess system performance and responsiveness under concurrent user load

- Ensure reliability of REST API integrations supporting assessments and reporting

- Improve overall platform usability, stability, and production readiness

Challenges

1. Complex Assessment Workflows

Psychometric tests involved multiple steps such as instructions, timed questions, validations, submissions, and result generation. Any functional defect could directly impact test integrity.

2. Multi-Language Support

The platform supported multiple languages, increasing the risk of content mismatches, UI misalignment, and incorrect data rendering.

3. Cross-Browser Consistency

Ensuring identical behavior across Chrome, Firefox, Edge, and Safari on Windows required extensive compatibility validation.

4. Concurrent Usage Load

The platform needed to handle multiple users attempting assessments simultaneously without performance degradation.

Solution

A structured QA strategy combining functional, cross-browser, API, and performance testing was implemented.

Functional Testing

- Designed detailed test scenarios covering:

  - Test-taker flows (login, assessment start, question navigation, submission)

  - Admin workflows (test configuration, user management, report access)

- Validated business rules, input validations, and edge cases

- Identified usability gaps and provided improvement suggestions
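To illustrate how a test-taker flow scenario like the ones listed above could be checked automatically, here is a minimal Python sketch. It assumes the flow can be recorded as a sequence of step names; the step names and the `find_flow_violations` helper are hypothetical and are not taken from the client platform.

```python
# Hypothetical step names, loosely based on the test-taker flow described above.
EXPECTED_FLOW = ["login", "assessment_start", "question_navigation", "submission"]


def find_flow_violations(recorded_steps):
    """Return a list of human-readable problems in a recorded step sequence."""
    problems = []
    # Every expected step must occur at least once.
    for step in EXPECTED_FLOW:
        if step not in recorded_steps:
            problems.append(f"missing step: {step}")
    # No step outside the expected flow may appear.
    for step in recorded_steps:
        if step not in EXPECTED_FLOW:
            problems.append(f"unexpected step: {step}")
    # The first occurrence of each expected step must respect the expected order.
    first_seen = [recorded_steps.index(s) for s in EXPECTED_FLOW if s in recorded_steps]
    if first_seen != sorted(first_seen):
        problems.append("steps executed out of order")
    return problems
```

An empty result means the recorded run matched the expected flow. Repeated steps (for example, navigating through several questions) are allowed because only the first occurrence of each step is compared against the expected order.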

Cross-Browser Testing

- Executed test cases across Chrome, Firefox, Edge, and Safari on Windows

- Verified:

  - UI alignment and responsiveness

  - JavaScript behavior consistency

  - Form handling and navigation stability

API Testing

- Validated REST APIs involved in:

  - Assessment submission

  - Result calculation

  - Data persistence

- Verified response accuracy, status codes, and data integrity
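The API checks above (status codes, response accuracy, data integrity) might be expressed as a small validation function. The Python sketch below is only an illustration: the field names (`assessment_id`, `answers`, `score`, `max_score`, `question_id`) and the expected status code of 200 are assumptions, since the platform's actual API contract is not described here.

```python
def validate_submission_response(status_code, body):
    """Check a parsed submission response and return a list of problems found.

    `body` is the decoded JSON payload as a dict. All field names used here
    are hypothetical; adapt them to the real API contract.
    """
    problems = []
    # Assumption: a successful submission answers with HTTP 200.
    if status_code != 200:
        problems.append(f"unexpected status code: {status_code}")
    for field in ("assessment_id", "answers", "score", "max_score"):
        if field not in body:
            problems.append(f"missing field: {field}")
    if problems:
        return problems  # skip integrity checks on a malformed response
    # Data integrity: the score must lie within the valid range.
    if not 0 <= body["score"] <= body["max_score"]:
        problems.append("score outside 0..max_score")
    # Data integrity: every stored answer must reference a question.
    if any("question_id" not in answer for answer in body["answers"]):
        problems.append("answer without question_id")
    return problems
```

Returning a list of problems rather than raising on the first one lets a single test run report every mismatch in a response, which tends to make defect reports more complete.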

Performance Testing

- Designed performance scenarios using JMeter

- Simulated concurrent test-taking by up to 20 users

- Measured:

  - Response time

  - System stability

  - Failure rates under load

- Identified performance bottlenecks and shared optimization recommendations
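The engagement used JMeter for these scenarios. As a rough illustration of the same idea (not a JMeter test plan), the Python sketch below runs 20 simulated users in parallel and summarizes response time and failure rate. `fake_submit` is a hypothetical stand-in for a real request, and its latency and failure pattern are invented purely for demonstration.

```python
import random
import statistics
import time
from concurrent.futures import ThreadPoolExecutor


def fake_submit(user_id):
    """Stand-in for one test-taker request; replace with a real HTTP call."""
    time.sleep(random.uniform(0.01, 0.03))  # simulated server latency
    if user_id % 10 == 0:  # deterministic simulated failure, for illustration only
        raise RuntimeError("simulated server error")


def timed_call(action, user_id):
    """Run one action and return (elapsed_seconds, succeeded)."""
    start = time.perf_counter()
    try:
        action(user_id)
        ok = True
    except Exception:
        ok = False
    return time.perf_counter() - start, ok


def run_load(action, users=20):
    """Run `action` once per simulated user, one thread per user."""
    with ThreadPoolExecutor(max_workers=users) as pool:
        results = list(pool.map(lambda uid: timed_call(action, uid), range(1, users + 1)))
    durations = [elapsed for elapsed, _ in results]
    failures = sum(1 for _, ok in results if not ok)
    return {
        "requests": len(results),
        "avg_seconds": statistics.mean(durations),
        "max_seconds": max(durations),
        "failure_rate": failures / len(results),
    }


if __name__ == "__main__":
    print(run_load(fake_submit))
```

A dedicated load tool adds ramp-up control, think times, and reporting that this sketch omits; the point here is only to show which quantities (response time, failure rate) are collected per simulated user.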

Defect Management & Reporting

- Documented functional and performance defects in Excel with:

  - Clear reproduction steps

  - Expected vs actual behavior

  - Severity and impact

- Shared consolidated reports with development teams for faster resolution
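One way to keep defect records consistent is to give them a fixed structure. The sketch below is an assumption about how that could look in Python: it models the attributes listed above as a dataclass and writes them as CSV, which spreadsheet tools such as Excel can open. The `Defect` fields, the severity scale, and the `defects_to_csv` helper are hypothetical; the engagement itself tracked defects directly in Excel.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class Defect:
    defect_id: str
    summary: str
    steps_to_reproduce: str
    expected: str
    actual: str
    severity: str  # e.g. "Critical", "Major", "Minor" (assumed scale)
    impact: str


def defects_to_csv(defects):
    """Serialize defects to CSV text with one header row and one row per defect."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(Defect)])
    writer.writeheader()
    for defect in defects:
        writer.writerow(asdict(defect))
    return buffer.getvalue()
```

Deriving the column names from the dataclass keeps the header and the rows in sync if a field is added later.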

Business Benefits & Impact

1. Reduced Production Risk and Business Disruption

Validating end-to-end assessment workflows and critical user journeys before release significantly reduced the risk of assessment failures in production. This helped keep test execution uninterrupted for end users and guarded against the reputational damage and loss of client trust that incomplete or invalid assessments can cause.

2. Improved Platform Reliability During Peak Usage

Performance testing with up to 20 concurrent users validated the platform’s ability to handle simultaneous test-takers without degradation at that load. This increased confidence in system stability during peak assessment windows and supports onboarding more users and running parallel assessment campaigns with lower operational risk.

3. Faster Release Cycles with Higher Confidence

Early identification of functional, cross-browser, and API-level issues reduced last-minute fixes and release rollbacks. As a result, stakeholders were able to approve releases faster with higher confidence, improving overall delivery timelines and reducing dependency on post-release hotfixes.

4. Enhanced User Experience and Assessment Credibility

Cross-browser and multilingual validation ensured consistent behavior, content accuracy, and UI rendering across regions. This directly improved test-taker experience and reinforced the credibility of the assessment process, which is critical for psychometric and evaluation-based platforms.

5. Better Data Accuracy and Reporting Integrity

API and functional validations ensured that user responses, scoring logic, and analytical reports were processed and stored accurately. This safeguarded data quality and improved the reliability of downloadable reports, enabling business users and clients to make informed, data-driven decisions.

6. Increased Scalability and Market Readiness

The structured QA approach validated the platform’s readiness for regional expansion and increased user volumes. With proven performance benchmarks and stability, the business was able to confidently scale platform usage across geographies without compromising quality or compliance.

7. Improved Stakeholder Visibility and Decision-Making

Detailed defect reporting and performance insights provided stakeholders with clear visibility into platform health, risks, and improvement areas. This transparency enabled better prioritization, informed technical decisions, and more predictable planning for future enhancements.

Conclusion

The QA engagement ensured the assessment and analytics platform met quality, performance, and usability expectations before release. A layered testing approach and realistic performance simulations validated the platform for real-world usage scenarios. The outcome was a stable, scalable, and user-ready system capable of supporting concurrent assessments with confidence.
