For the better part of two decades, test automation was the answer QA teams reached for whenever the question was about speed, consistency, or scale. It slashed regression timelines, reduced the dependency on repetitive manual effort, and gave delivery teams the confidence they needed inside Agile and DevOps cadences. For a long time, that was enough.

But here is where things stand today. The software being built is genuinely more complex than anything automation frameworks were originally designed to handle. Microservices architectures, real-time data flows, AI-powered features, and globally distributed systems have introduced a level of variability that pre-scripted test suites cannot keep pace with. The old model is not broken. It has simply reached its ceiling.

- The Core Problem: QA Processes Are Still Static in a Dynamic World

- AI and Automation: A Complementary Relationship

- Embedding AI Across the QA Lifecycle

- 1. Requirement Analysis and Early Risk Identification

- 2. Test Design: Compressing Days into Hours

- 3. AI-Assisted Test Case Generation: What It Looks Like in Practice

- 4. Automation Framework Design with AI Assistance

- 5. Intelligent Test Execution: Self-Healing and Risk-Based Selection

- 6. CI/CD Integration and Continuous Testing Strategy

- 7. Root Cause Analysis and Defect Management

- 8. Production Monitoring and the Feedback Loop

- Real-World Impact Across Industries

- From Execution to Intelligence: The QA Mindset Shift

- Building an AI-Enabled QA Process: A Practical Framework

- Measuring the Impact of AI-Driven Quality Engineering

- Challenges in Adopting AI in QA, and How to Navigate Them

- How Jignect Enables AI-Driven Quality Engineering

- The Future of QA: Predictive, Autonomous, and Business-Aligned

- Conclusion: The Right Time to Make the Move

What has been missing is intelligence. Not more tests or faster execution, but the ability to interpret context, adapt to changing application behaviour, and make proactive quality decisions rather than reactive ones. That is the space AI-driven quality engineering occupies.

Automation executes. AI decides. This distinction defines the next generation of quality engineering, and it is reshaping how enterprise QA teams think about their role in the delivery process.

At Jignect, this shift did not begin as a technology decision. As we detailed in our earlier blog on the journey from automation-first to AI-first quality engineering, the real trigger was discovering that automation had solved the execution problem but left the reasoning problem largely untouched. Our engineers were still spending significant time performing the same types of analysis across projects, and that cognitive overhead was the real constraint on scale.

This blog picks up where that journey leaves off. It explores how AI integrates across every stage of the QA lifecycle, what it looks like in practice inside a real delivery environment, and what enterprise QA teams need to do to make the transition successfully. If you want the story of how Jignect arrived at AI-first quality engineering, that foundation is covered in the earlier piece. What follows here is about what the capability looks like when it is fully operational, and how to build it.

Related Reading: From Automation-First to AI-First Quality Engineering: Jignect’s Journey

Read about how Jignect built its QA Prompt Library, the phases of transition from manual QA to automation to AI-first reasoning, and the cultural shifts that made it possible.

The Core Problem: QA Processes Are Still Static in a Dynamic World

Most QA teams, even highly automated ones, are operating on fundamentally static processes. Test suites are written once and rarely revisited unless something breaks. Prioritisation is driven by habit rather than any real analysis of where risk lives in the codebase. Defect triage happens after a build has already failed. Coverage decisions are made by experienced engineers working from memory and intuition, not live data.

This is not a criticism of those teams. It is a reflection of what automation alone can deliver. The problem becomes most visible at scale.

The test automation challenges that compound as organisations grow tend to follow a predictable pattern:

- Test maintenance consumes more QA capacity than teams budget for. Scripts go stale as applications evolve, and keeping them current starts to compete with writing new coverage.

- Coverage gaps remain invisible until something escapes to production. There is no reliable mechanism for knowing what is not being tested.

- Regression pipelines that were built to accelerate delivery start creating bottlenecks instead, with long execution times and slow triage cycles.

- Scaling automation horizontally amplifies the noise without adding context. More tests produce more results to interpret, but the interpretation work remains just as manual as before.

The outcome is QA that is technically automated but strategically reactive. Teams move faster on paper but still depend on human judgment for every decision that matters. For organisations operating in regulated or high-velocity sectors, that gap is no longer acceptable.

AI-driven quality engineering addresses the root cause rather than the symptoms. It is not about running more tests. It is about building a quality system that is adaptive, predictive, and continuously learning.

AI and Automation: A Complementary Relationship

A concern that surfaces regularly when QA leaders evaluate AI adoption is whether it will make their existing automation investment obsolete. In practice, the opposite is true. AI derives its value from the infrastructure that automation provides. Without reliable pipelines, CI/CD integrations, and scripted test coverage, an AI layer has nothing meaningful to work from.

Think of it this way: automation is the engine. AI is the navigation system sitting on top of it. The engine runs the same way regardless. The navigation layer decides what route to take, what to prioritise, when to slow down, and when to escalate. Both are necessary. Neither is sufficient on its own.

Intelligent test automation is not a replacement strategy. It is an amplification strategy. AI makes the automation investment your team has already built smarter, faster, and more aligned to the business risks that actually matter.

The QA transformation that AI enables moves through recognisable stages. Most enterprise teams are already in Stage 1: automated execution, reduced manual effort, reliable regression coverage. AI-driven quality engineering is the structured path to Stage 2, where prioritisation, execution, and interpretation all become data-driven rather than intuition-driven. Stage 3 is where the system self-heals, self-prioritises, and surfaces risk proactively, without waiting for human direction.

Moving from Stage 1 to Stage 2 does not require starting from scratch. It requires enriching what already exists with an intelligence layer built on real execution data and contextual decision-making. That is precisely the model Jignect has been developing across its delivery practice.

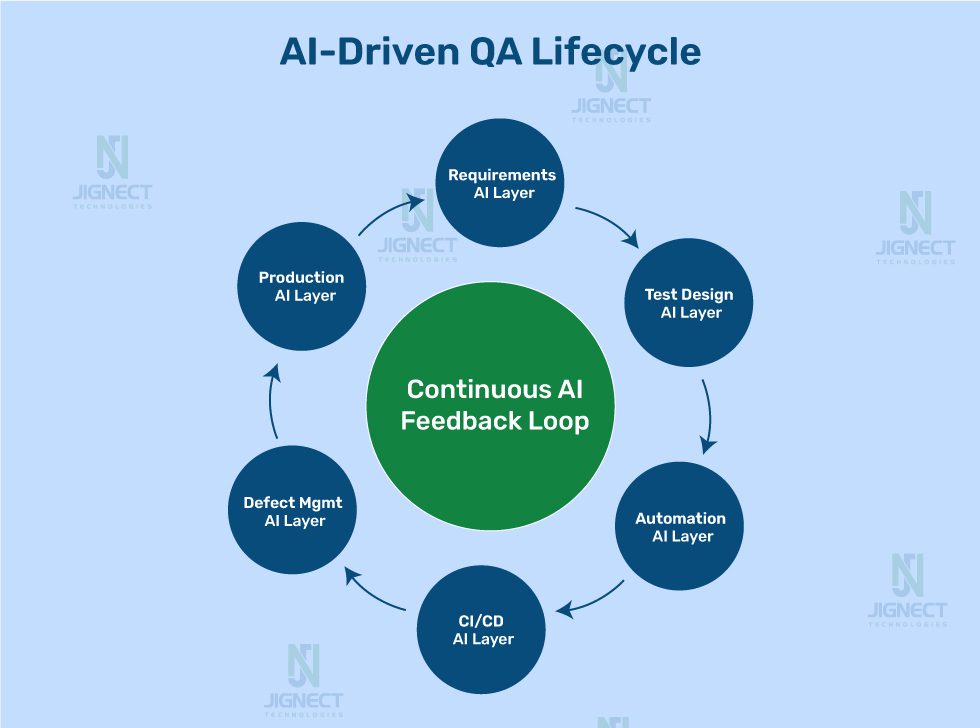

Embedding AI Across the QA Lifecycle

The practical value of AI in software testing becomes clearest when you examine what it contributes at each stage of the QA lifecycle. The impact is not uniform. Some stages see dramatic time compression. Others see qualitative improvements in the decisions being made. Together the effect compounds, and each layer of intelligence makes the next one more effective.

1. Requirement Analysis and Early Risk Identification

Traditional QA involvement starts at test design. AI-driven quality engineering starts earlier, at requirements. By analysing user stories, acceptance criteria, and business specifications, AI can identify ambiguities, conflicting requirements, and coverage gaps before a single line of code is written.

At Jignect, this is where the QA Prompt Library delivers some of its most immediate value. Our Requirement Analysis prompts guide the AI to evaluate functional flows, edge cases, error handling, data validation, security considerations, and integration risks across a structured set of dimensions. Crucially, the output is not treated as a final analysis. It is a reasoning accelerator that allows engineers to explore a broader scenario space more quickly than they could working from a blank page.

The practical impact of this approach is that critical risks surface earlier. In a FinTech context, for example, a requirement for a fund transfer feature might clearly describe the happy path but leave transfer limits, network interruption behaviour, and duplicate request handling undefined. An AI-assisted review catches those gaps during specification, not during regression, which is a significant difference in cost and timeline.

How Jignect applies this in practice

Jignect’s Requirement Analysis prompt instructs the AI to think as a senior QA engineer would, covering functional flows, boundary conditions, negative scenarios, integration risks, security considerations, concurrency risks, and observability needs. Engineers then extend the AI output with system-specific context before test design begins. This approach has consistently improved the quality of early QA involvement during feature planning across our delivery engagements.

2. Test Design: Compressing Days into Hours

Test case creation is one of the most time-intensive parts of the QA process. Writing comprehensive scenarios for a complex feature, covering positive, negative, boundary, and edge cases with traceability to requirements, can take a senior QA engineer several days per feature cycle depending on scope.

AI in software testing compresses this substantially. By analysing requirement documents, existing test repositories, defect history, and application context, AI-assisted generation produces an initial test suite in hours. These are not generic placeholders. They are contextually relevant, risk-weighted cases aligned to the actual behaviour of the system under test.

A SaaS company running two-week sprints reduced test case creation time from an average of three days per feature cycle to under four hours. Senior QA engineers reclaimed that time for complex exploratory testing and quality strategy work that AI cannot do for them.

The benefit compounds as the model accumulates sprint history. Test generation becomes progressively more targeted as it learns from execution results and defect patterns across cycles.

3. AI-Assisted Test Case Generation: What It Looks Like in Practice

This is where most discussions about AI in QA remain abstract, so it is worth making the implementation concrete. The workflow Jignect uses for AI-assisted test case generation on real features follows four steps.

1. Feed structured context into the AI model. This includes the user story, acceptance criteria, relevant API contracts, and a brief summary of the module’s known defect patterns from previous cycles.

2. Prompt the AI to generate a risk-weighted test case matrix covering positive, negative, boundary, and integration scenarios, with each case including ID, title, preconditions, test steps, expected result, and risk tier.

3. A QA engineer reviews the output, adjusts for environment-specific constraints, removes duplicates, and tags cases by automation priority.

4. Approved cases are pushed directly into the test management system and linked to the relevant automation scripts.

Below is an example of the prompt structure Jignect uses for this workflow. It is an extension of the test case drafting prompts from our QA Prompt Library, adapted specifically for the risk-weighted output format our teams need.

JIGNECT QA PROMPT: AI-Assisted Test Case Generation

Act as an experienced QA engineer responsible for creating comprehensive test coverage for the feature described below.

Context:

Feature: Guest checkout with saved address auto-fill

User Story: As a returning guest user, I want my previously used shipping address suggested at checkout so I can complete my purchase faster.

Acceptance Criteria:

- Address shown if user email matches an existing order record

- User can accept the suggestion or enter a new address

- Pre-populated fields remain editable

- No suggestion shown if no prior order address exists

Known defect history: Address pre-fill fails when postal code contains spaces (reported v2.3, closed v2.4)

Guidelines:

- Cover positive, negative, boundary, and integration scenarios

- Do not duplicate scenarios

- Prioritise by risk level

Output format for each test case:

Test Case ID | Title | Priority (High/Medium/Low)

Test Type (Functional / Validation / Negative / Edge / Regression)

Preconditions | Steps | Expected Result

Generate:

1. Happy path scenarios (3-5 cases)

2. Negative / invalid input scenarios (4-6 cases)

3. Boundary and edge cases (3-4 cases)

4. Integration scenarios with the order history API (2-3 cases)

5. Regression case for the v2.3 postal code defect

A well-configured model with access to the test repository and code context produces fifteen to twenty structured test cases from this prompt in a matter of minutes. The QA engineer’s job then shifts from writing scenarios from scratch to reviewing, validating, and extending the output with system-specific knowledge that the model cannot derive from the requirement document alone.
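Step 1 of the workflow, feeding structured context into the model, can be made repeatable by rendering the prompt from typed fields rather than editing text by hand. The following is a minimal sketch, not Jignect's actual tooling: `FeatureContext`, `SCENARIO_GROUPS`, and `build_test_case_prompt` are hypothetical names, the generic "Integration scenarios" group stands in for the feature-specific wording in the example, and the call to the model itself is deliberately left out.

```python
from dataclasses import dataclass, field


@dataclass
class FeatureContext:
    """Structured inputs for one feature. Field names are illustrative."""
    feature: str
    user_story: str
    acceptance_criteria: list[str]
    defect_history: list[str] = field(default_factory=list)


# Scenario groups adapted from the example prompt (assumed to be reusable).
SCENARIO_GROUPS = [
    "Happy path scenarios (3-5 cases)",
    "Negative / invalid input scenarios (4-6 cases)",
    "Boundary and edge cases (3-4 cases)",
    "Integration scenarios (2-3 cases)",
]


def build_test_case_prompt(ctx: FeatureContext) -> str:
    """Render the context into the same layout as the example prompt."""
    lines = [
        "Act as an experienced QA engineer responsible for creating "
        "comprehensive test coverage for the feature described below.",
        "",
        "Context:",
        f"Feature: {ctx.feature}",
        f"User Story: {ctx.user_story}",
        "Acceptance Criteria:",
        *[f"- {criterion}" for criterion in ctx.acceptance_criteria],
    ]
    if ctx.defect_history:
        lines.append("Known defect history: " + "; ".join(ctx.defect_history))
    lines += [
        "",
        "Guidelines:",
        "- Cover positive, negative, boundary, and integration scenarios",
        "- Do not duplicate scenarios",
        "- Prioritise by risk level",
        "",
        "Output format for each test case:",
        "Test Case ID | Title | Priority (High/Medium/Low) | Test Type | "
        "Preconditions | Steps | Expected Result",
        "",
        "Generate:",
    ]
    # Add one regression group per known defect, after the standard groups.
    groups = SCENARIO_GROUPS + [
        f"Regression case for: {defect}" for defect in ctx.defect_history
    ]
    lines += [f"{i}. {group}" for i, group in enumerate(groups, start=1)]
    return "\n".join(lines)


if __name__ == "__main__":
    ctx = FeatureContext(
        feature="Guest checkout with saved address auto-fill",
        user_story="As a returning guest user, I want my previously used "
                   "shipping address suggested at checkout.",
        acceptance_criteria=[
            "Address shown if user email matches an existing order record",
            "Pre-populated fields remain editable",
        ],
        defect_history=["Address pre-fill fails when postal code contains spaces"],
    )
    print(build_test_case_prompt(ctx))
```

Keeping the context in a typed structure means the same template can be reused across features, and a missing field shows up as an error in code rather than as a silently weaker prompt.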

This is a meaningful change in how QA capacity is spent. The volume of coverage does not shrink. The engineering time spent on repetitive documentation does.

4. Automation Framework Design with AI Assistance

The Jignect QA Prompt Library extends beyond functional testing into automation engineering itself. One of the patterns our architects observed early on was that automation framework design for new projects followed recognisable structural principles regardless of the application domain. The same layered architecture questions, the same decisions about Page Object structure, Data Factory design, configuration management, and utility layer organisation, came up on every engagement.

Rather than rebuilding this reasoning from scratch for each project, Jignect developed automation architecture prompts that generate a baseline framework design based on the technology stack of the system under test. The output covers recommended testing layers, framework architecture principles, folder structure, and CI/CD integration guidance. Engineers then adapt this scaffold to project-specific requirements.

The same principle applies to Page Object class generation. When a new module is being brought under automation coverage, providing the AI with the HTML structure, the interaction patterns, and the framework conventions already in use produces a well-structured Page Object class in minutes rather than hours. The engineer reviews it, adds any missing selectors, and validates it against the live application.

Jignect automation framework architecture

Jignect’s automation frameworks use a layered design pattern that separates test logic, UI interaction, and data management. The foundation is the Page Object Model for UI isolation, extended with Data Objects for structured test entities, a Data Factory pattern for dynamic test data generation, and a centralised Utility Layer for shared helper methods. AI is used to scaffold new instances of this architecture for each engagement, ensuring structural consistency across projects while reducing setup time.
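To make the layering concrete, here is a minimal sketch of how a Page Object, a Data Object, and a Data Factory might fit together. It is an illustration under stated assumptions rather than Jignect's framework: the `Driver` protocol, the selectors, `UserFactory`, and the `RecordingDriver` fake are all hypothetical, and a real suite would plug in Selenium, Playwright, or a similar driver instead.

```python
from dataclasses import dataclass
from itertools import count
from typing import Protocol


class Driver(Protocol):
    """The minimal browser operations the page object relies on (assumed)."""
    def fill(self, selector: str, text: str) -> None: ...
    def click(self, selector: str) -> None: ...


@dataclass(frozen=True)
class User:
    """Data Object: a structured test entity."""
    email: str
    password: str


class UserFactory:
    """Data Factory: generates a unique user for each test."""
    _sequence = count(1)

    @classmethod
    def create(cls, domain: str = "example.test") -> User:
        n = next(cls._sequence)
        return User(email=f"user{n}@{domain}", password=f"Pw-{n:04d}!")


class LoginPage:
    """Page Object: the only place that knows the login selectors."""
    EMAIL = "#email"
    PASSWORD = "#password"
    SUBMIT = "button[type=submit]"

    def __init__(self, driver: Driver) -> None:
        self.driver = driver

    def login(self, user: User) -> None:
        self.driver.fill(self.EMAIL, user.email)
        self.driver.fill(self.PASSWORD, user.password)
        self.driver.click(self.SUBMIT)


class RecordingDriver:
    """Fake driver that records actions so the sketch runs without a browser."""
    def __init__(self) -> None:
        self.actions: list[tuple[str, ...]] = []

    def fill(self, selector: str, text: str) -> None:
        self.actions.append(("fill", selector, text))

    def click(self, selector: str) -> None:
        self.actions.append(("click", selector))


if __name__ == "__main__":
    driver = RecordingDriver()
    LoginPage(driver).login(UserFactory.create())
    print(driver.actions)
```

Because the test logic only calls `LoginPage.login`, a selector change touches one class, which is the isolation the Page Object Model is meant to provide.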

5. Intelligent Test Execution: Self-Healing and Risk-Based Selection

Once the test suite exists, the next set of AI capabilities centres on how tests are executed. Two capabilities in particular have the most direct impact on engineering productivity.

Self-healing automation addresses the maintenance overhead that consumes a disproportionate share of QA bandwidth in active development environments. When UI locators break because a button is renamed or a field is repositioned, self-healing AI detects the change contextually and updates the selector automatically based on semantic understanding of the element. The failure that would previously have required a QA engineer to diagnose, update, and verify is resolved without manual intervention. In large suites, where maintenance can absorb thirty to forty percent of QA bandwidth, this capability alone can recover most of that capacity.
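As a rough illustration of the idea, the sketch below re-identifies an element after its locator breaks by comparing the attributes recorded for it against the elements currently on the page. This is a deliberately simple string-similarity heuristic standing in for the semantic matching a production tool would use; `heal_locator`, the attribute names, and the 0.6 threshold are assumptions made for the example.

```python
from difflib import SequenceMatcher
from typing import Optional


def similarity(a: str, b: str) -> float:
    """Case-insensitive similarity between two attribute values, from 0 to 1."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def heal_locator(
    known: dict[str, str],
    candidates: list[dict[str, str]],
    threshold: float = 0.6,
) -> Optional[dict[str, str]]:
    """Return the candidate that best matches the last known attributes.

    `known` holds the attributes recorded when the test last passed;
    `candidates` are elements found on the current page. Returns None when
    nothing is similar enough, so the failure is escalated to an engineer
    instead of being silently "healed" onto the wrong element.
    """
    best, best_score = None, 0.0
    for candidate in candidates:
        scores = [
            similarity(value, candidate.get(name, ""))
            for name, value in known.items()
        ]
        score = sum(scores) / len(scores)
        if score > best_score:
            best, best_score = candidate, score
    return best if best_score >= threshold else None


if __name__ == "__main__":
    known = {"tag": "button", "id": "submit-order", "text": "Submit order"}
    page = [
        {"tag": "a", "id": "help-link", "text": "Help"},
        {"tag": "button", "id": "place-order", "text": "Place order"},
    ]
    print(heal_locator(known, page))
```

The threshold matters more than the scoring function: set too low, the suite heals onto the wrong element and hides a real regression, which is why healed locators are usually logged for review.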

Risk-based test selection addresses the other major inefficiency: running the full test suite on every build regardless of what changed. An AI model that analyses the code delta and evaluates which test cases are most likely to surface regressions given that specific change can construct an optimised execution set for each build. The full suite still runs on a scheduled cadence. The critical-path feedback loop compresses from hours to minutes.

A healthcare platform managing patient appointment workflows had a regression suite of approximately 4,200 automated tests. Every pipeline run executed the full suite, averaging three hours and twenty minutes. After implementing AI-driven risk-based test selection, the average feedback time for standard feature changes dropped to forty-five minutes. Defect detection rate remained consistent. Developer wait time fell by seventy-eight percent.
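The selection step can be sketched as a scoring function over the code delta. The example below is a simplified heuristic, not a trained model: it assumes a per-test record of which files each test exercises and how often it failed recently, and the names `CandidateTest`, `risk_score`, `select_tests`, and the 0.7/0.3 weights are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CandidateTest:
    name: str
    covered_files: frozenset[str]   # files this test is known to exercise
    recent_failure_rate: float      # share of recent runs that failed, 0 to 1


def risk_score(test: CandidateTest, changed_files: set[str]) -> float:
    """Score how likely a test is to catch a regression for this change."""
    overlap = len(test.covered_files & changed_files)
    if overlap == 0:
        return 0.0  # test does not touch the change; leave it to the full run
    coverage_signal = overlap / len(changed_files)
    # Weights are illustrative; a real model would learn them from history.
    return 0.7 * coverage_signal + 0.3 * test.recent_failure_rate


def select_tests(
    tests: list[CandidateTest], changed_files: set[str], budget: int
) -> list[str]:
    """Return up to `budget` test names, highest risk first."""
    scored = [(risk_score(t, changed_files), t.name) for t in tests]
    relevant = [item for item in scored if item[0] > 0]
    relevant.sort(key=lambda item: item[0], reverse=True)
    return [name for _, name in relevant[:budget]]


if __name__ == "__main__":
    suite = [
        CandidateTest("checkout", frozenset({"cart.py", "pay.py"}), 0.2),
        CandidateTest("profile", frozenset({"user.py"}), 0.9),
        CandidateTest("refund", frozenset({"pay.py"}), 0.5),
    ]
    print(select_tests(suite, {"pay.py"}, budget=2))
```

The healthcare figures above are consistent with this kind of cut: going from 200 minutes to 45 minutes is a reduction of 155/200, or 77.5 percent, which rounds to the seventy-eight percent quoted.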

6. CI/CD Integration and Continuous Testing Strategy

Modern software delivery depends on CI/CD pipelines making fast, reliable promotion decisions. Traditional quality gates give a binary answer. Either everything passed or something failed. An AI-driven continuous testing strategy replaces that binary model with probability-weighted quality assessment.

What this means practically is that the pipeline can make nuanced decisions. A change touching low-risk, well-tested utility functions gets a high confidence score and promotes quickly. A change that modifies a historically brittle integration point, even if all current tests pass, gets flagged for extended review before it moves forward. The AI is not overriding the automation results. It is contextualising them against everything it knows about that code path’s behaviour history.
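One way to picture such a gate is as a score that combines the current run with the history of the code path. The sketch below is an assumption-laden toy rather than a description of any particular product: `ChangeSignal`, the multiplicative `confidence` formula, and the 0.8 promotion threshold are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class ChangeSignal:
    pass_rate: float                # share of executed tests that passed, 0 to 1
    historical_failure_rate: float  # how often past changes here caused failures
    coverage: float                 # share of changed lines exercised by tests


def confidence(signal: ChangeSignal) -> float:
    """Combine the signals into a single 0 to 1 confidence score."""
    return (
        signal.pass_rate
        * (1.0 - signal.historical_failure_rate)
        * signal.coverage
    )


def gate_decision(signal: ChangeSignal, promote_at: float = 0.8) -> str:
    """Decide what the pipeline should do with this change."""
    if signal.pass_rate < 1.0:
        return "block"  # a failing test is never overridden by the score
    if confidence(signal) >= promote_at:
        return "promote"
    # All tests passed, but history or coverage says to look more closely.
    return "extended-review"


if __name__ == "__main__":
    utility_change = ChangeSignal(1.0, 0.02, 0.95)
    brittle_integration = ChangeSignal(1.0, 0.40, 0.90)
    print(gate_decision(utility_change))       # promote
    print(gate_decision(brittle_integration))  # extended-review
```

Note that the score only ever adds caution on top of the test results: a failing run is blocked regardless, which matches the point that the AI contextualises the automation results rather than overriding them.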

For an e-commerce platform running eight to twelve deployments per day across multiple services, this level of intelligence transforms the relationship between QA and release velocity. The pipeline moves faster because it is making smarter decisions, not because quality checks are being bypassed.

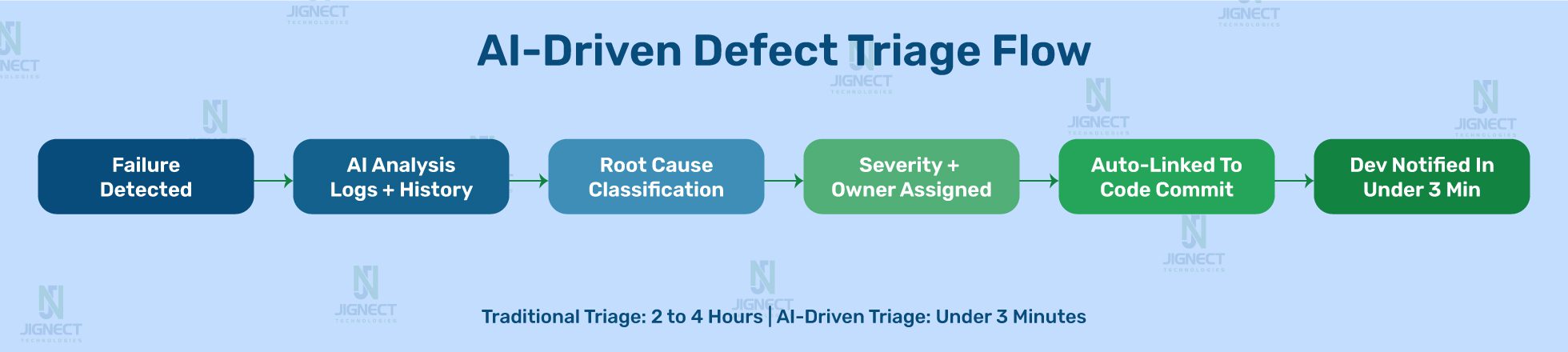

7. Root Cause Analysis and Defect Management

Defect management in most QA processes is reactive by design. A test fails, a ticket gets created, it is triaged, assigned, and resolved. The workflow is linear and creates predictable bottlenecks at every handoff.

AI changes the economics of this process in two directions. On the reactive side, AI-assisted triage automatically classifies defects by severity, probable root cause, affected component, and suggested ownership. What typically takes a QA lead two to three hours to work through in a triage meeting is handled in minutes. The AI correlates the failure against commit history, related test results, performance data, and deployment logs to produce a structured diagnosis the developer can act on immediately.

At Jignect, root cause analysis is one of the most actively used prompt categories in our library. When an automation failure occurs, our engineers use structured RCA prompts that provide the AI with the failure log, the relevant test code, the stack trace, and a summary of recent deployments. The prompt asks the model to distinguish between three possible failure categories: product defect, automation instability, or environment issue. This three-way classification is something that used to require an experienced senior engineer and often took hours. With a well-constructed prompt and relevant context, it takes minutes, and the engineer’s job becomes validating the diagnosis rather than constructing it.

How Jignect uses AI for Root Cause Analysis

Jignect’s RCA prompts provide the AI with failure logs, test code, stack traces, and recent deployment context. The model is asked to classify the failure as a product defect, automation instability, or environment issue, explain the likely root cause, identify the relevant component and code path, and suggest the next diagnostic step. Engineers validate and extend the output rather than building the analysis from scratch. This approach has significantly reduced the time from failure detection to actionable assignment across our delivery engagements.
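A structured RCA prompt is easier to govern when the three categories are fixed in code and the model's reply is checked against them. The following sketch shows one possible shape, assuming the model is instructed to begin its reply with a `Category:` line; `FailureCategory`, `build_rca_prompt`, and `parse_category` are hypothetical names, and the model call itself is omitted.

```python
from enum import Enum
from typing import Optional


class FailureCategory(Enum):
    PRODUCT_DEFECT = "product defect"
    AUTOMATION_INSTABILITY = "automation instability"
    ENVIRONMENT_ISSUE = "environment issue"


def build_rca_prompt(
    failure_log: str, test_code: str, stack_trace: str, recent_deployments: str
) -> str:
    """Assemble the RCA prompt from the four context inputs."""
    options = ", ".join(category.value for category in FailureCategory)
    return "\n".join([
        "Act as a senior QA engineer performing root cause analysis.",
        f"Classify the failure as exactly one of: {options}.",
        "Start your reply with 'Category: <one of the options>'. Then explain",
        "the likely root cause, the affected component and code path, and the",
        "next diagnostic step.",
        "",
        "Failure log:", failure_log,
        "Test code:", test_code,
        "Stack trace:", stack_trace,
        "Recent deployments:", recent_deployments,
    ])


def parse_category(reply: str) -> Optional[FailureCategory]:
    """Return the category named in the reply, or None if missing or unknown."""
    for line in reply.splitlines():
        if line.strip().lower().startswith("category:"):
            value = line.split(":", 1)[1].strip().lower()
            for category in FailureCategory:
                if category.value == value:
                    return category
            return None  # a category line exists but names something else
    return None


if __name__ == "__main__":
    reply = "Category: Environment issue\nRoot cause: test database unreachable."
    print(parse_category(reply))
```

Returning `None` for anything outside the three categories gives the reviewing engineer an explicit signal that the output needs manual classification rather than quiet acceptance.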

8. Production Monitoring and the Feedback Loop

The richest source of quality signal available to any QA team is the production environment. Most traditional QA processes treat production as out of scope, handed off to operations or monitoring teams once release is done. AI-driven quality engineering dissolves that boundary.

Production anomaly detection, identifying unusual error rate spikes, performance degradations, or user experience regressions, feeds directly back into the test suite. When a category of production failure is detected, AI can automatically generate test cases to cover that scenario in future cycles. The test suite evolves based on real-world evidence rather than sitting static between manual review cycles.
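The detection half of this loop can be illustrated with a basic statistical check. The sketch below flags endpoints whose latest error rate sits far above their recent baseline, using a z-score as a stand-in for whatever anomaly model a real monitoring stack provides; the function names, the data layout, and the threshold of 3 are assumptions. Flagged endpoints would then become candidates for new test cases.

```python
from statistics import mean, stdev


def is_error_spike(
    history: list[float], latest: float, z_threshold: float = 3.0
) -> bool:
    """True if `latest` is unusually high compared with `history`."""
    if len(history) < 2:
        return False  # not enough data to estimate a baseline
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        return latest > baseline
    return (latest - baseline) / spread > z_threshold


def endpoints_needing_tests(error_rates: dict[str, list[float]]) -> list[str]:
    """Return endpoints whose most recent error rate is anomalous.

    `error_rates` maps an endpoint to its error-rate samples, oldest first.
    """
    flagged = []
    for endpoint, samples in error_rates.items():
        if not samples:
            continue
        *history, latest = samples
        if is_error_spike(history, latest):
            flagged.append(endpoint)
    return flagged


if __name__ == "__main__":
    observed = {
        "/checkout": [0.010, 0.012, 0.011, 0.009, 0.010, 0.050],
        "/search": [0.010, 0.012, 0.011, 0.009, 0.010, 0.011],
    }
    print(endpoints_needing_tests(observed))
```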

For a SaaS platform with a distributed global user base, this feedback loop means quality does not plateau at release time. It improves continuously, with each production cycle making the next one safer.

Real-World Impact Across Industries

The value of AI-driven quality engineering is consistent across industries, but the specific problems it solves vary by sector. Here is how the impact manifests in four domains where Jignect operates.

1. FinTech

Financial technology platforms face two simultaneous pressures that are fundamentally in tension under traditional QA models. The market demands rapid feature delivery. Compliance frameworks demand verifiable, zero-defect precision in regulated code paths. Speed and thoroughness usually trade off against each other.

AI-driven quality engineering resolves this by separating the two concerns. AI handles high-frequency, well-defined regression coverage with speed and consistency. Human QA engineering focuses on the compliance-critical, context-heavy scenarios that require genuine domain judgment. A payment platform running four-day regression cycles before AI integration brought that down to under twelve hours while expanding coverage of compliance-sensitive transaction paths by thirty-five percent. Both goals were served without trading one off against the other.

The Jignect Requirement Gap Analysis prompt is particularly valuable in FinTech contexts, where regulatory requirements interact with feature specifications in ways that are easy to overlook during development. Raising these interactions during requirement review rather than during regression testing is a significant shift in the cost curve.

2. Healthcare

Healthcare applications carry quality implications that extend well beyond user inconvenience. When software handles patient records, clinical decisions, or appointment scheduling, a defect is a potential patient safety issue. The regulatory frameworks governing this space, HIPAA and FDA 21 CFR Part 11 among them, add documentation and validation requirements on top of the technical quality bar.

AI-driven quality engineering addresses both the complexity and the compliance overhead. Test documentation aligned to validation protocols is generated automatically. Audit trails are maintained comprehensively without additional manual effort. Risk-based coverage models map directly to regulatory requirement categories rather than relying on engineer judgment to make those connections manually.

One healthcare team migrating from a legacy EHR system to a cloud-based platform used AI-assisted test generation to produce a full validation test suite in three weeks. The same effort had been scoped at four months using traditional approaches. Traceability and compliance coverage were preserved throughout.

3. E-commerce

E-commerce QA operates under a particular kind of pressure that other industries do not feel as acutely. A broken checkout flow during peak traffic is not an abstract quality metric. It is a direct, measurable revenue event.

Risk-based test selection ensures that every build exercises the highest-revenue-impact flows first. Visual AI testing detects UI regressions across device and browser combinations at a speed no manual process can match. Production anomaly detection catches performance degradation before it escalates into a user-visible failure. A retail platform that implemented these capabilities ahead of its peak trading season reduced critical production failures by over sixty percent compared to the previous year, with no increase in QA headcount.

4. SaaS

SaaS products are defined by their release cadence. Customers expect continuous improvement, and competitive markets punish teams that ship slowly. The pressure to maintain daily or multiple-weekly deployments while keeping quality high is the central tension of SaaS QA.

Scalable QA solutions built on AI address this directly. Self-healing automation keeps test suites current with a rapidly evolving product without the maintenance overhead that traditionally eats QA capacity. AI-generated test cases keep pace with feature development rather than lagging behind it. Intelligent quality gates make release decisions based on contextual risk, so the pipeline does not become the bottleneck.

A SaaS business running three production releases per week reduced QA-related release delays by seventy percent after fully integrating AI-driven capabilities, while maintaining comprehensive regression coverage across all active release branches.

From Execution to Intelligence: The QA Mindset Shift

Getting the technology right is only part of the transition to AI-driven quality engineering. The more difficult part, in most organisations, is the shift in how QA teams understand their own purpose.

Traditional QA identity is built around finding defects. The measure of a good QA team is how many bugs they catch before release. That framing, while intuitive, limits the strategic value QA can contribute. AI-driven quality engineering raises that ceiling significantly, but only if teams are oriented toward a different goal.

The most significant barrier to AI-driven quality engineering is not technology. It is the assumption that QA’s job is to find bugs. The real job of AI-driven QA is to prevent them, and to give the organisation a continuous, accurate view of quality risk so that every release decision is an informed one.

At Jignect, this shift showed up clearly during our own AI-first transition. The concern among QA engineers was not that AI would take over testing. It was about what their role would look like when the repetitive reasoning tasks were absorbed. The answer that emerged from practice was that the work became more interesting, not less. Engineers spent more time on complex system risks, novel business scenarios, and quality strategy, and less time on documentation and regression maintenance.

Three dimensions of this mindset shift have practical implications for how QA teams are structured and measured:

- Quality moves from a delivery phase to a continuous system. It is not a final checkpoint before release. It is a persistent process that monitors, learns, and signals risk across the entire development lifecycle.

- QA metrics evolve from execution outputs to business outcomes. Tests run and pass rate give way to defect escape rate, risk coverage confidence, and deployment reliability as the primary indicators of QA health.

- QA engineers evolve from test executors to quality intelligence analysts. The work becomes interpretation, strategy, exploratory investigation, and AI governance rather than script writing and regression execution.

Building an AI-Enabled QA Process: A Practical Framework

For QA Managers and Directors evaluating how to implement AI in QA processes, the most effective approach is structured and incremental. The following five steps reflect how successful AI-in-QA adoptions actually unfold.

Step 1: Assess and Baseline

Before introducing AI, establish a clear performance baseline: test coverage, defect escape rates, pipeline duration, maintenance overhead, and release confidence. Without this baseline, measuring the impact of AI adoption is guesswork. The baseline also reveals where AI will deliver the most immediate value.
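Two of these baseline figures reduce to simple ratios, which makes them easy to track consistently before and after adoption. The sketch below uses a common definition of defect escape rate (defects found in production divided by all defects found) and expresses maintenance overhead as a share of QA hours; the `QaBaseline` class and its field names are hypothetical, and teams may define these metrics differently.

```python
from dataclasses import dataclass


@dataclass
class QaBaseline:
    defects_found_before_release: int
    defects_escaped_to_production: int
    maintenance_hours: float
    total_qa_hours: float

    @property
    def defect_escape_rate(self) -> float:
        """Share of all known defects that reached production."""
        total = self.defects_found_before_release + self.defects_escaped_to_production
        return self.defects_escaped_to_production / total if total else 0.0

    @property
    def maintenance_share(self) -> float:
        """Share of QA time spent keeping existing tests working."""
        if self.total_qa_hours == 0:
            return 0.0
        return self.maintenance_hours / self.total_qa_hours


if __name__ == "__main__":
    quarter = QaBaseline(
        defects_found_before_release=90,
        defects_escaped_to_production=10,
        maintenance_hours=140,
        total_qa_hours=400,
    )
    print(f"Escape rate: {quarter.defect_escape_rate:.0%}")
    print(f"Maintenance share: {quarter.maintenance_share:.0%}")
```

Capturing the same two numbers each quarter gives a like-for-like comparison once AI capabilities are introduced.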

Step 2: Identify High-Value Integration Points

Not every stage of your QA lifecycle has equal readiness for AI integration. Start with the pain points that are most visible and most costly. For many teams this is test maintenance overhead. For others it is the slow feedback loop in CI/CD, or a defect escape rate that keeps climbing despite larger test suites. Start there.

Step 3: Build Your Quality Data Foundation

AI performs in proportion to the quality of the data it learns from. Fragmented test repositories, inconsistently tagged defects, and execution data spread across disconnected tools will constrain AI performance regardless of which capabilities are introduced. Data consolidation and consistent reporting structure are not optional groundwork. They are the foundation.

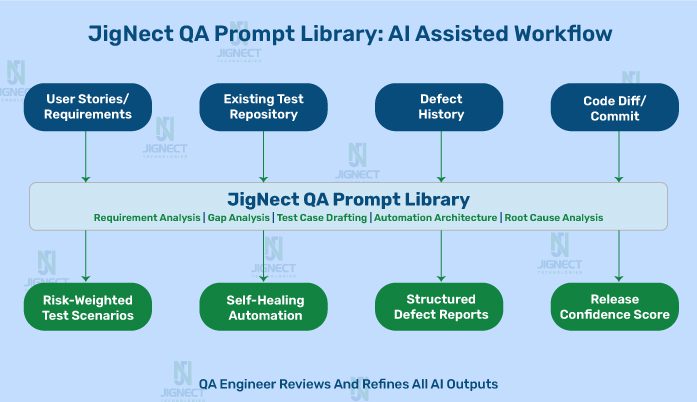

Step 4: Formalise Institutional Knowledge into Prompt Structures

One of the most underutilised aspects of AI adoption in QA is the opportunity to codify institutional knowledge. Every experienced QA team has accumulated years of domain-specific reasoning about how to analyse requirements, design test architecture, and investigate failures.

When that reasoning is captured in well-structured prompt templates, it becomes reusable, consistent, and transferable across the team. Jignect’s QA Prompt Library is exactly this: an organised system for making the reasoning of senior engineers available to every member of the team, on every project.

Step 5: Evolve Roles, Governance, and Metrics Together

As AI absorbs execution-level tasks, QA roles need to evolve toward quality engineering, AI model governance, exploratory testing, and strategic risk analysis. Governance is important here: AI outputs need human review and validation before they become test artefacts. This is not a formality. It is how the system maintains quality over time. At Jignect, every AI-generated output in our QA workflow is reviewed by an engineer before it moves forward, with accountability structures that ensure the quality of AI inputs is maintained.

Measuring the Impact of AI-Driven Quality Engineering

The most common question from QA leadership evaluating AI investment is straightforward: how do we know it is working? The answer requires a metrics model that goes beyond traditional QA reporting.

| Traditional Automation | AI-Driven Quality Engineering |
| --- | --- |
| Test creation: 3 to 5 days per feature cycle | AI-assisted test creation: under 4 hours per feature cycle |
| Regression cycle: 3 to 5 days full suite | Intelligent regression: 6 to 12 hours with risk-based selection |
| Test maintenance: 30 to 40% of QA bandwidth | Self-healing automation: maintenance under 10% of capacity |
| Defect triage: 2 to 4 hours of manual investigation | AI triage: under 3 minutes with root cause context and auto-assignment |
| Coverage decisions: experience and intuition | Coverage decisions: risk model trained on real execution data |
| Quality gate: binary pass or fail | Quality gate: probability-weighted, risk-contextual assessment |
| QA role: execution and maintenance focused | QA role: quality intelligence, governance, and strategy |

Beyond the operational metrics, the business outcomes tell the fuller story. Reduced cost of quality. Faster time to market for features that require significant QA coverage. Lower production defect rates that translate into reduced support load and stronger customer retention. These are the numbers that resonate with C-suite stakeholders and justify sustained investment in quality engineering services.

Challenges in Adopting AI in QA, and How to Navigate Them

The benefits of AI in software testing are well evidenced, but adoption is rarely frictionless. The teams that navigate this transition most successfully tend to anticipate the challenges rather than encounter them reactively.

Data quality and availability

AI models require structured, consistent, and historically rich data to produce meaningful results. Most QA organisations have the data in principle but not in practice. It exists across multiple tools with inconsistent tagging and incomplete history. Addressing this is upstream of any AI capability introduction. Teams that invest in data infrastructure first consistently see better AI outcomes than those who try to run AI on poorly organised data.
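As an illustration of what that upstream work can look like, the Python sketch below consolidates inconsistent test tags from different tools into one canonical vocabulary and reports anything it cannot map. The alias table and the record shape (a dict with a `tag` key) are assumptions made for the example, not a prescribed schema.

```python
from collections import Counter
from typing import Optional

# Hypothetical alias map: each tool's spelling on the left, the canonical tag on the right.
TAG_ALIASES = {
    "regression": "regression",
    "regr": "regression",
    "reg-suite": "regression",
    "smoke": "smoke",
    "sanity": "smoke",
}


def normalise_tag(raw: str) -> Optional[str]:
    """Return the canonical tag, or None when the spelling is unknown."""
    key = raw.strip().lower().replace("_", "-").replace(" ", "-")
    return TAG_ALIASES.get(key)


def normalise_records(records):
    """Split records into cleaned ones and a count of unmapped tags for follow-up."""
    clean, unmapped = [], Counter()
    for record in records:
        tag = normalise_tag(record["tag"])
        if tag is None:
            unmapped[record["tag"]] += 1
            continue
        clean.append({**record, "tag": tag})
    return clean, unmapped
```

Keeping the unmapped counts, rather than silently dropping those records, gives the team a worklist of tagging gaps to close before the data is used for anything downstream.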

Organisational change and team readiness

QA engineers who have built expertise in manual testing and automation scripting are right to ask what their role looks like in an AI-driven model. That question needs a clear answer, communicated early and backed by concrete upskilling plans. At Jignect, the transition required active investment in training engineers to write effective prompts, interpret AI outputs critically, and govern the quality of AI-generated artefacts. Teams that understood they were being empowered became advocates. Those left without clear direction became resistors.

Prompt quality and output governance

One lesson Jignect learned early is that the quality of AI output is directly proportional to the quality of the prompt. Generic prompts produce generic results. Structured prompts with clear context, explicit constraints, and defined output formats produce results that QA engineers can work with immediately. Building a shared prompt library that captures best-practice prompt structures is not a nice-to-have. It is the mechanism that turns AI from an individual experiment into a team-level capability.
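One way to picture a shared prompt library entry is as a small structured template rather than free text. The Python sketch below is illustrative only: the `PromptTemplate` class, its field names, and the example entry are hypothetical and do not describe Jignect's internal library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptTemplate:
    name: str
    context: str                      # what the model needs to know about the system
    task: str                         # what it is being asked to produce
    constraints: tuple = ()           # explicit limits on the answer
    output_format: str = ""           # the shape engineers expect back

    def render(self, **values: str) -> str:
        """Fill the {placeholders}; a missing value raises KeyError instead of passing silently."""
        parts = [f"Context: {self.context}", f"Task: {self.task}"]
        if self.constraints:
            parts.append("Constraints:")
            parts.extend(f"- {c}" for c in self.constraints)
        if self.output_format:
            parts.append(f"Output format: {self.output_format}")
        return "\n".join(parts).format(**values)


# A library is then just named templates that the whole team can review and version.
LIBRARY = {
    "negative-cases": PromptTemplate(
        name="negative-cases",
        context="You are testing the {feature} flow of a web application.",
        task="List negative test cases for the acceptance criteria below.\n{criteria}",
        constraints=("One scenario per case", "No assumptions beyond the criteria"),
        output_format="A table with columns: ID, Steps, Expected result",
    )
}
```

Calling `LIBRARY["negative-cases"].render(feature="login", criteria="...")` produces the full prompt text. Because context, constraints, and output format are separate fields, each can be reviewed and improved on its own.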

Integration with legacy QA infrastructure

Enterprises running QA on frameworks built five or ten years ago will encounter integration complexity. Introducing AI capabilities often requires API abstraction layers, data pipeline work, and phased migration of test assets. This is solvable with realistic planning and a partner who has worked through the same challenge in comparable environments.

How Jignect Enables AI-Driven Quality Engineering

Jignect’s approach to AI-driven quality engineering is grounded in a principle that emerged from our own internal transition: process transformation has to come before technology introduction. We have seen AI adoption efforts fail because the intelligence layer was introduced before the process was ready to support it. Sequence matters.

Every client engagement begins with a QA maturity assessment that maps the current state across people, process, tooling, and data. From that baseline, we design a structured QA transformation roadmap that identifies the AI integration points with the highest ROI for that specific context. The roadmap is not generic. It reflects the product, the industry, the delivery cadence, and the team’s current capability level.

The QA Prompt Library model that Jignect developed internally is now a core part of how we deliver AI-driven quality engineering for clients. We do not just introduce AI capabilities. We work with client QA teams to build their own prompt libraries that capture their institutional knowledge in a form that is reusable, governable, and improvable over time. That library becomes the team’s long-term AI asset, independent of any specific tool or platform.

Our quality engineering services span the full AI-in-QA lifecycle: requirement analysis and risk modelling, intelligent test design, automation framework scaffolding, CI/CD integration, production monitoring, and feedback loop management. We co-build a tailored AI-enabled QA process that the client team owns and operates. Knowledge transfer is built into every engagement. The goal is capability, not dependency.

Jignect measures the success of every AI-driven quality engineering engagement by three things: your defect escape rate trend, your release velocity, and your team’s ability to make confident, data-driven quality decisions. Not by the sophistication of the technology deployed.
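For readers who want to track the first of those measures themselves, the Python sketch below uses one common definition of defect escape rate: defects found in production divided by all defects found for the release. The function names and the simple first-to-last trend are assumptions made for illustration, not a description of how any particular engagement is scored.

```python
def defect_escape_rate(found_in_production: int, found_before_release: int) -> float:
    """Fraction of a release's known defects that were only caught in production."""
    total = found_in_production + found_before_release
    if total == 0:
        return 0.0
    return found_in_production / total


def escape_rate_trend(rates: list) -> float:
    """Latest rate minus earliest rate; a negative value means fewer defects are escaping."""
    if len(rates) < 2:
        return 0.0
    return rates[-1] - rates[0]
```

Computed per release and plotted over time, the rate shows whether quality is improving regardless of which tools are in use.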

The Future of QA: Predictive, Autonomous, and Business-Aligned

The trajectory of AI-driven quality engineering is already visible in the organisations furthest along the adoption curve. What they are building today gives a clear picture of where the discipline is heading.

Predictive testing is the near-term frontier: systems that assess quality risk at the code-change level before any tests are executed, drawing on static analysis, historical defect patterns, dependency mapping, and team velocity signals. A developer pushing a commit gets a risk profile of that change before the pipeline even starts. This enables genuinely intelligent decisions about when to run extended testing and when a standard quality gate is sufficient.

Autonomous quality agents are the medium-term reality: AI systems that manage the full test lifecycle for high-frequency, well-defined scenarios without human intervention at each step. QA engineers concentrate their time on the scenarios that require human judgment: novel business scenarios, complex integration behaviours, and strategic quality governance.

Further out, the boundary between quality engineering and product intelligence will dissolve. Quality data from testing, production monitoring, and user behaviour analytics will feed directly into product strategy and architecture decisions. Quality will not be a function that validates software. It will be an intelligence capability that continuously informs how software is built.

For QA leaders making investment decisions today, the practical question is not whether to move in this direction. It is how quickly they can build a solid foundation in data infrastructure, process maturity, and team capability, so they can take full advantage of where AI-driven quality engineering is going.

Conclusion: The Right Time to Make the Move

Automation changed quality assurance a generation ago. It replaced a fragmented, manually dependent process with something consistent, repeatable, and scalable. That was a genuine transformation, and teams that adopted it early built a meaningful advantage.

The next transformation is happening now. AI-driven quality engineering takes everything that automation built and makes it smarter. Adaptive execution instead of fixed scripts. Predictive risk intelligence instead of reactive defect detection. Institutional knowledge encoded in prompt libraries instead of sitting locked inside individual engineers. Continuous learning instead of static coverage.

The results are not incremental. Test cycles compress from days to hours. Defect escape rates fall measurably. Pipelines make intelligent release decisions. QA teams evolve from executing tests to leading quality strategy. Across FinTech, Healthcare, E-commerce, and SaaS, organisations are building these capabilities today.

The question is not whether AI will redefine quality engineering in your industry. It already is doing so. The question is whether your organisation will be ahead of that shift or responding to it.

Ready to move from automated to intelligent quality engineering? Partner with Jignect to build an AI-driven QA capability that is grounded in your real process, measured against your actual business outcomes, and built to scale with your product. Talk to our quality engineering team and find out where AI can deliver the most immediate impact for your organisation.

Happy Testing 🙂